Imagine you are going about your day as usual when a friend suddenly sends you a very realistic video of you (with your full name attached) in a pornographic film you did not take part in or consent to. How would you feel? Shocked, embarrassed, scared, angry, and in disbelief? There may not be words to describe your feelings and the psychological turmoil you would go through, especially once you find out that there is not much you can do about it while the creator of the video walks free and continues to create and distribute more false media like this. This ‘scary’ technology, which can turn our data into a nightmare, is called synthetic media (or deepfakes), in which images, videos, and recordings are altered.

Researchers and the media have been expressing concerns about the risks of AI, such as data misuse and privacy violations, and the existence and misuse of deepfakes are among their biggest concerns. Deepfakes can seriously damage anyone’s life and credibility and can compromise democracy and our sense of reality. No one is safe from this technology: people lose control over the use of their own face, which could lead to disastrous consequences.

While Artificial Intelligence (AI)-based tools are said to bring accessible solutions to all, we live in constant fear of cyberattacks and do not fully trust these technologies yet. In particular, after seeing cases like the Facebook and Cambridge Analytica data scandal, people have started to question how their data is used by tech companies. At present, the use and advancement of AI algorithms do not go hand in hand with data security. Since AI and Machine Learning (ML) need a lot of data to work, AI developers obtain data from any source they can find. This could be social media, your Google account history, surveillance cameras, and many others.

You might be thinking this only happens to famous people, such as celebrities and public figures, but you are sadly mistaken. Today, even with a minimal presence on social media, i.e., only a few pictures and/or videos present, anyone could create a deepfake of you. For example, a pornographic deepfake of a law graduate in Australia was made without her consent, and she only got to know about this when a friend informed her about it. In fact, 96% of deepfakes are pornographic and almost all victims are women.

While this may be the case at present, deepfakes have the potential to cause a lot more damage. This synthetic technology, in the wrong hands, can pose a very real national security threat, could impact elections worldwide, and could be a major threat to democracy. One way to combat the misuse of deepfakes is to use AI ‘against’ AI, that is, to use AI algorithms to distinguish deepfake content from real content. Once detection becomes easier, it should be possible to prosecute those who misuse such technology for malicious purposes.

What are Deepfakes and how are they generated?

Deepfakes use AI, in particular machine learning and deep learning, to make images, videos, and recordings of events that never happened in order to manipulate emotions.

The results are impressive and can be very realistic. To see how realistic the images generated by this technology can turn out, one can visit the ThisPersonDoesNotExist.com website, which displays good examples of how these algorithms can generate endless fake faces. Every time the page is refreshed, a different person is generated and this happens almost immediately.

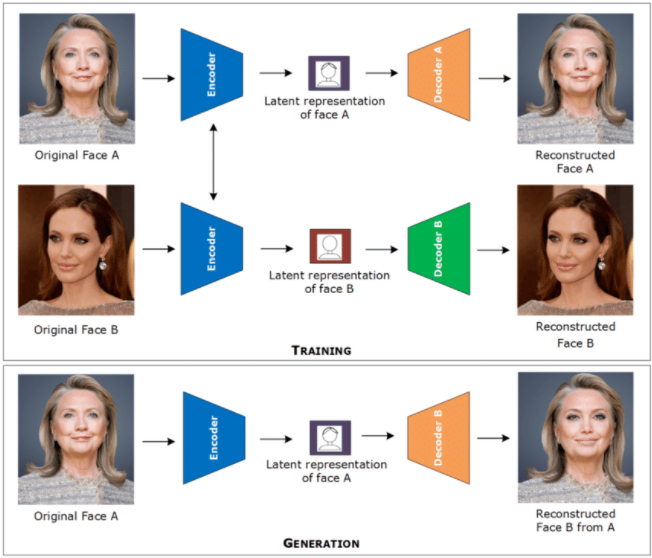

Generally, deepfakes are trained and generated frame by frame before the results are combined into a video clip. Different techniques are used to create synthetic media; they are primarily based on autoencoders and generative adversarial networks (GANs).

When using autoencoders, two networks use the same encoder but different decoders for the training process. Then, in the generation process, an image of face A is encoded with the common encoder and decoded with decoder B to create a deepfake.
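The data flow described above can be sketched in a few lines of Python. This is only an illustration of the wiring (one shared encoder, one decoder per face), not a working deepfake model: the weights are random rather than trained, each "layer" is a single matrix multiplication, and every name here (`FaceSwapAutoencoder`, `swap_a_to_b`, the image size) is a hypothetical choice made for the example.

```python
import random


def random_layer(n_in, n_out, rng):
    """Random weight matrix with n_out rows and n_in columns (placeholder for trained weights)."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]


def apply_layer(weights, vector):
    """Multiply a weight matrix by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


class FaceSwapAutoencoder:
    """One shared encoder and one decoder per identity (A and B)."""

    def __init__(self, n_pixels, n_latent, seed=0):
        rng = random.Random(seed)
        self.encoder = random_layer(n_pixels, n_latent, rng)    # shared by A and B
        self.decoder_a = random_layer(n_latent, n_pixels, rng)  # rebuilds face A
        self.decoder_b = random_layer(n_latent, n_pixels, rng)  # rebuilds face B

    def encode(self, face):
        """Compress a flattened face image into a small latent vector."""
        return apply_layer(self.encoder, face)

    def reconstruct_a(self, face_a):
        """Training path for identity A: encode, then decode with decoder A."""
        return apply_layer(self.decoder_a, self.encode(face_a))

    def reconstruct_b(self, face_b):
        """Training path for identity B: encode, then decode with decoder B."""
        return apply_layer(self.decoder_b, self.encode(face_b))

    def swap_a_to_b(self, face_a):
        """Generation path: encode face A, then decode with decoder B."""
        return apply_layer(self.decoder_b, self.encode(face_a))


model = FaceSwapAutoencoder(n_pixels=16, n_latent=4)
face_a = [0.5] * 16                  # stand-in for a flattened 4x4 grayscale image
swapped = model.swap_a_to_b(face_a)  # same size as the input image
```

In a real system, both reconstruction paths would be trained so that the shared encoder captures pose and expression while each decoder learns to render one specific face. That is why routing face A through decoder B produces a swap.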

On the other hand, the GAN method works with two neural networks, a generator and a discriminator, that compete against each other. The generator produces a new image based on what it has learned so far. The discriminator determines whether an image is real or fake. This competition enables the model to learn in an unsupervised way, and the results become better and better, but at the same time this can make the fakes harder to detect.
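To make the generator-versus-discriminator competition concrete, here is a toy sketch on one-dimensional numbers instead of images. It assumes a linear generator, a logistic discriminator, and hand-written gradients; the "real" data distribution, the learning rate, and all function names are made up for illustration, and actual deepfake GANs use deep networks and a deep learning framework.

```python
import math
import random


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def generate(g, z):
    """Linear generator g = (a, b): turn noise z into a fake sample."""
    a, b = g
    return a * z + b


def discriminate(d, x):
    """Logistic discriminator d = (w, c): probability that x is real."""
    w, c = d
    return sigmoid(w * x + c)


def discriminator_step(d, real, fake, lr):
    """One gradient step on -log D(real) - log(1 - D(fake)), averaged over the batch."""
    w, c = d
    grad_w = grad_c = 0.0
    for x in real:                      # push D(real) towards 1
        p = discriminate(d, x)
        grad_w += -(1.0 - p) * x
        grad_c += -(1.0 - p)
    for x in fake:                      # push D(fake) towards 0
        p = discriminate(d, x)
        grad_w += p * x
        grad_c += p
    n = len(real) + len(fake)
    return (w - lr * grad_w / n, c - lr * grad_c / n)


def generator_step(g, d, noise, lr):
    """One gradient step on -log D(G(z)): make fakes that the discriminator accepts."""
    a, b = g
    w, _ = d
    grad_a = grad_b = 0.0
    for z in noise:
        p = discriminate(d, generate(g, z))
        dloss_dx = -(1.0 - p) * w       # derivative of the loss w.r.t. the fake sample
        grad_a += dloss_dx * z
        grad_b += dloss_dx
    n = len(noise)
    return (a - lr * grad_a / n, b - lr * grad_b / n)


def train(steps=200, lr=0.05, batch=16, seed=0):
    """Alternate discriminator and generator updates on toy 1-D data."""
    rng = random.Random(seed)
    g, d = (1.0, 0.0), (0.0, 0.0)
    for _ in range(steps):
        real = [rng.gauss(4.0, 0.5) for _ in range(batch)]  # assumed "real" data
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [generate(g, z) for z in noise]
        d = discriminator_step(d, real, fake, lr)
        g = generator_step(g, d, noise, lr)
    return g, d
```

Each round, the discriminator first learns to separate real samples from fakes, and the generator then shifts its output in whatever direction the discriminator currently rates as more "real". Training of this kind is known to oscillate, so the sketch makes no claim about where the parameters end up.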

This technology of synthetic media has huge potential in the film industry from bringing back deceased actors and actresses to creating more realistic scenes in movies at a very low cost. It can also be used for educational purposes. Let’s imagine being able to have a deepfake of Albert Einstein teaching us physics. This would make the lecture much more interesting for the students.

What are the risks and consequences?

At the same time, this technology can be seriously dangerous: it can be used to spread fake news, run scams, and violate data privacy, putting our democracy in danger and ruining anyone’s credibility.

If you look up ‘deepfakes’ on YouTube, you will be impressed by the quality of the results and by the number of tutorials that show you how to create a fake video in a few minutes. Famous figures like Obama, Queen Elizabeth, and Trump have been targeted because a large amount of recorded material of them is readily available. Public figures like them often face the camera directly, so it is easier to manipulate their images and videos.

Recently, many journalists, and even the FBI in the United States, have warned the public that Russia and China use deepfakes to spread false news as part of their influence campaigns. With deepfakes, false information about elections and political figures could circulate and influence public opinion. Furthermore, deepfakes could be used to falsely accuse an innocent citizen of a crime they did not commit, or to help a dangerous criminal escape prosecution by claiming that the evidence against them is fake.

For example, in 2018 the citizens of the African country of Gabon suspected that their president, Ali Bongo, was seriously ill or dead. To quell the speculation, the government announced that the president had suffered a stroke but was in good health. The government soon released a video of Bongo delivering the New Year’s address to the nation. Within a week, the military launched an unsuccessful coup, citing the video as a deepfake. It was never established whether the video was, in fact, a deepfake, but it could have changed the course of government in Gabon. The mere idea of deepfakes is enough to accelerate the unraveling of an already precarious situation.

Another concerning example of the misuse of deepfakes is how they help fraudsters make money. In 2019, deepfake audio was used to steal millions by impersonating various chief executives and tricking staff into transferring large sums of cash. The attackers identified weak points in the audio where some words were poorly generated and inserted convincing background noise to mask the flaws. One such crime was reported in March 2019, when the CEO of a UK-based energy firm was asked, over the phone, to wire $243,000 to a Hungarian supplier by someone who appeared to be the CEO of the firm’s German parent company. The British CEO complied. Only later did the firm realize that AI-based software had been used to impersonate the German CEO’s voice.

According to Symantec, millions of dollars were stolen from three companies that fell victim to deepfake audio attacks. In each attack, an AI-generated synthetic voice called a senior financial officer to request an urgent money transfer. The deepfake models had been trained on the CEO’s public speeches.

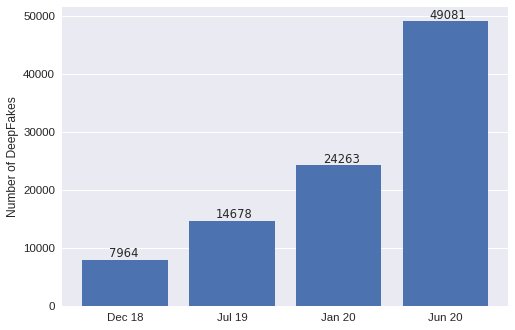

The rapid evolution and popularity of this technology are what we fear the most. The number of fake videos has been doubling every six months since observations started in December 2018, and it will most likely continue to grow in the future.
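To get a feel for what "doubling every six months" means, the growth factor after a given number of months is 2 raised to (months / 6). The short helper below works this out. It only computes relative growth, since the starting count is not given here, and it assumes the doubling rate stays constant, which is a simplification. The function name is a hypothetical one chosen for this example.

```python
def growth_factor(months, doubling_period_months=6.0):
    """How many times larger a quantity is after `months`, if it doubles every period."""
    return 2.0 ** (months / doubling_period_months)


one_year = growth_factor(12)   # two doublings
two_years = growth_factor(24)  # four doublings
```

Under this assumption, two years of steady doubling means sixteen times as many fake videos as at the start.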

It is becoming extremely inexpensive and easy to create a deepfake of a person. For example, at deepfakesweb.com, a person can create a high-quality deepfake for just $60. Although this platform adds visible watermarks and intentionally leaves imperfections so that its output can be identified as a deepfake, open-source alternatives are available without these protections.

How can deepfakes be detected?

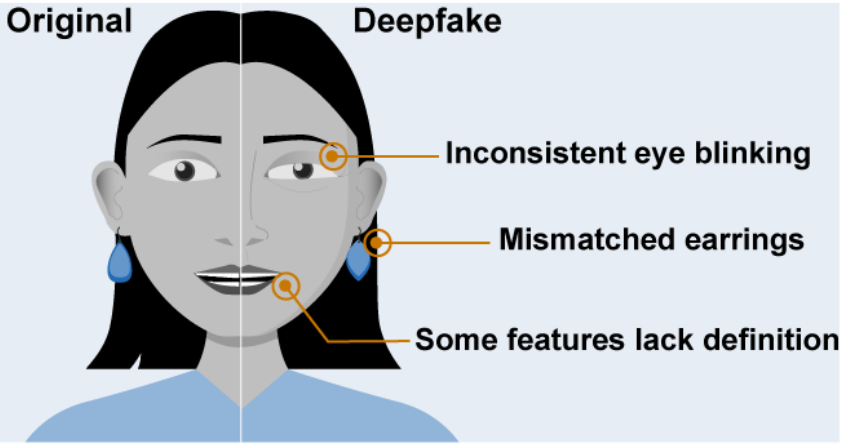

Numerous attempts have been made to detect deepfakes using ‘AI against AI’. While some poor-quality deepfakes can be spotted with the human eye, others can be quite difficult to recognize. The compression applied to images and videos when they are uploaded to the internet could be one of the factors that make detection harder.

For instance, in 2019, industry leaders (e.g., Facebook, Amazon (AWS), and Microsoft) and academic experts (e.g., from MIT, the University of Oxford, and UC Berkeley) created the Deepfake Detection Challenge (DFDC) to speed up the development of new ways to detect AI-generated fake videos. Looking at how AI can help in this battle, several methods have emerged.

In “In Ictu Oculi: Exposing AI Generated Fake Face Videos by Detecting Eye Blinking”, an algorithm detects deepfakes using unnatural eye movements in the videos. Blinking is a physiological signal that is not well represented in synthesized fake videos; therefore, it can be used to distinguish fake videos from real ones.
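The blinking idea can be illustrated with a simple heuristic. This is not the method from the paper: it assumes that some other tool has already produced a per-frame "eye openness" score between 0 (closed) and 1 (open), and it simply counts blinks and flags videos with too few of them. The function names and both thresholds are arbitrary values chosen for the example.

```python
def count_blinks(openness, closed_below=0.2):
    """Count open-to-closed transitions in a sequence of per-frame eye-openness scores."""
    blinks = 0
    eyes_closed = False
    for score in openness:
        if score < closed_below and not eyes_closed:
            blinks += 1               # the eyes have just closed: a new blink starts
            eyes_closed = True
        elif score >= closed_below:
            eyes_closed = False       # the eyes are open again
    return blinks


def blinks_per_minute(openness, fps, closed_below=0.2):
    """Average blink rate of a clip recorded at `fps` frames per second."""
    minutes = len(openness) / fps / 60.0
    if minutes == 0:
        return 0.0
    return count_blinks(openness, closed_below) / minutes


def looks_suspicious(openness, fps, min_blinks_per_minute=5.0):
    """Flag a clip whose subject blinks less often than the assumed threshold."""
    return blinks_per_minute(openness, fps) < min_blinks_per_minute


# One minute of video at 30 frames per second.
natural = ([1.0] * 147 + [0.1] * 3) * 12   # a short blink every five seconds
unblinking = [1.0] * 1800                  # the eyes never close

natural_flagged = looks_suspicious(natural, fps=30)
unblinking_flagged = looks_suspicious(unblinking, fps=30)
```

A fixed threshold like this is easy to fool, and blink rates vary from person to person, so a heuristic of this kind could at most serve as one signal among many.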

In another scientific paper “Hybrid LSTM and Encoder-Decoder Architecture for Detection of Image Forgeries”, pixel artifacts helped in the detection of deepfakes. Their research included the development of a deep neural network architecture able to identify fake images using special characteristics such as unnatural smoothing and feathering.

A study conducted by researchers at the University of Southern California took a different approach that focused on head and face gestures. It uses neural networks trained on the characteristic mannerisms of each individual, such as smirking when making a point or nodding the head, and compares them with suspected deepfakes to check the authenticity of video content. When tested on videos of world leaders, the algorithm was able to detect deepfake videos with 92% accuracy.

Although the success rate is not 100%, using AI to detect deepfakes seems to be one of the best solutions so far. This approach is similar to combating malware in software systems, where creating and distributing antivirus programs is key. New malware keeps appearing, but at the same time, new antivirus software is created to defeat it.

Additionally, the general public should become more cautious and stop treating everything we see as fact. This means, for example, spreading awareness of the existence of synthetic media, checking several reliable sources to verify the information in any article or video, and paying attention to details and glitches that are visible to the human eye.

How to protect against deepfakes?

When it comes to laws and regulations around the creation and distribution of deepfakes, most countries have very few or no laws to protect their citizens against this threat. In the U.S., the Deepfakes Accountability Act (introduced in Congress in 2019) would mandate that deepfakes be watermarked for identification. Though such a law would make deepfakes identifiable, it would not solve the problem. Marking a video as ‘fake’ does not give its creators the right to use a person’s face without their consent. Also, these videos are often so widely spread, having been uploaded over and over again on different websites, that they could still be a threat in the future, e.g., by violating a person’s consent and privacy.

Furthermore, most people are not even aware of the existence of deepfakes, and due to human nature, we believe everything we see. It is hard to disbelieve something we see with our own eyes, even when it is marked as ‘fake’.

An issue that arises when trying to seek justice for crimes committed using synthetic media is that deepfakes are often protected under the freedom of expression and speech. For example, in the case of U.S. v. Alvarez, a badly divided Supreme Court held that the First Amendment prohibits the government from regulating speech simply because it is a lie. This makes it extremely difficult for victims to receive justice. New laws and regulations are necessary to protect against the misuse of deepfakes.

States like California and Virginia in the U.S. have revised their laws to ban nonconsensual pornography, including deepfakes, and have also banned the circulation of deepfakes around election periods. These laws also allow victims to sue the people or platforms responsible; they can receive compensation for legal fees and for any distress caused by the violation of their privacy.

Meanwhile, China is attempting to ban deepfakes and similar technologies altogether by holding the platforms that support deepfakes, rather than the creators, accountable, starting from March 2022. This new policy makes it the platforms’ responsibility to ensure that nothing false or unapproved is generated through the use of powerful recommendation algorithms.

Completely banning deepfakes might not be a desirable solution. They can be harmful when used to spread manipulated media, but they can also be a powerful tool for expressing humor, dissent, and social critique. Moreover, synthetic media can be used for accessibility, education, criminal forensics, and artistic expression.

A possible solution to make deepfakes less of a threat and to encourage the responsible use of synthetic media might be to make it mandatory to get a ‘license’ to create deepfakes. Governments could make the creation and distribution of deepfakes without a license a criminal offense. This could be similar to requiring a license to possess and use firearms.

To conclude

Deepfakes have proven to be a very powerful tool with huge potential, however they are used. The misuse of this technology could lead to catastrophic results, as mentioned above. With such a rapidly evolving technology, humans need to keep up when it comes to detecting it and protecting against it. While synthetic media are becoming more realistic and difficult to spot, we need to keep improving AI detection algorithms.

To reduce the damage caused by the exploitation of deepfakes, governments need to impose laws and restrictions that prevent the misuse of this virtual weapon. While banning deepfakes completely may stunt advancements in AI and is not a feasible solution, regulations are still highly needed. We propose that, in order to create and distribute synthetic media, creators should be required to obtain a license, as is the case with any other dangerous weapon or technology. This, along with improvements in detection, does not guarantee the elimination of deepfake crimes, but we hope it will reduce them to a large extent and promote the responsible use of synthetic media.