February 1, 2022

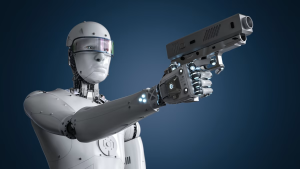

War and violence have caused a great deal of misery throughout history and continue to do so today. Killer robots are now gaining more and more attention in the media. Most people are against them, and organizations such as PAX are committed to outlawing their development and use. Opposition to an autonomous machine that is designed to kill people is to be expected, as practically everyone is against murder and war. There are, however, many countries and organizations that are willing to invest large sums of money in them. In this article we shed light on the positive and negative sides of killer robots and explain why we think autonomous systems lead to more conflicts, and thus more warfare, and should therefore be banned. We do so by emphasizing the danger of hacking, the responsibility gap that killer robots bring, the danger of causing more violence, and misuse by terrorists. On the other side, we consider the arguments that autonomous weapons may be less biased than humans, that they could be more rational, that an international arms race has a deterrent effect, and that deaths during wars might decrease.

Our Future Veterans

Killer robots are starting to move beyond the state-of-the-art research phase and into deployment. According to Skynet Today, at least twelve states are now both developing and deploying these autonomous machines, including all five permanent members of the United Nations Security Council (also known as the Big Five). This AI arms race involves the development of a variety of weapons, including missiles, drones, cyber-weapons, rifles, tanks and even ships.

The United Nations reported that the first victim of AI warfare may have fallen in 2020 during a military conflict in Libya, killed by a so-called Kargu rotary-wing attack drone. This drone can fly itself to a specific location, choose its targets, and autonomously decide to shoot and therefore kill. The U.N. stated that the AI drone made its debut, but did not draw any conclusion about the result. If someone was indeed killed, this would be the first time a human was killed by an artificially intelligent system.

Kargu rotary-wing attack drone (credit: STM)

The ongoing development and deployment of AI in warfare shows how urgent this debate is, and how little time we have left to hit the brakes.

Safety First

Cybersecurity is becoming more and more important in today’s society. Sometimes, entire companies need to temporarily shut down or pay ransom due to a cyber-attack. Most of the time those companies were not sufficiently prepared against such an attack, but in some cases, even the most protected companies or systems get hacked. This tells us that no matter the security measures taken, a system can never be 100% safe against cyber-attacks.

We should ask ourselves whether we want an autonomous system to make decisions about our lives if that system may be vulnerable to hackers, who could be hired by people with bad intentions. In the worst case, someone could, for example, hack a missile launching system to bomb an entire city. This may sound a bit extreme, but the risk should never be played down, however small the chance.

Radicalization

In the section ‘Safety First’, we discussed the danger of autonomous weapons being controlled by someone with bad intentions after hacking these systems. However, why would someone with bad intentions put all that effort into hacking a system if they could simply obtain such a system themselves? You may think that this is not possible because these systems are only accessible to the people who ‘should’ own them, but history has taught us that the illegal arms trade is ever-present and very hard to curb.

One consequence of the illegal arms trade is terrorism. Terrorist organizations are willing to pay a lot of money for weapons and are therefore likely to be willing to pay a lot for autonomous weapons as well. Imagine what would happen if terrorists possessed autonomous drones. This would greatly lower the threshold for terrorist attacks. Besides, if terrorists as well as armies can use autonomous weapons, their strengths match again, which brings us back to the same situation we are in right now.

Deaths at Discount

The potential use of killer robots creates a major responsibility problem. Let’s imagine a scenario where a suicide drone belonging to ‘Army-A’ is used in warfare to automatically detect enemies from ‘Army-B’ and to autonomously decide when to detonate, and thus kill. Army-A deploys the drone and sits back, waiting for a result. The drone flies over enemy territory and at a certain point detects a group of enemies. It is ‘pretty’ sure these are real enemies and therefore decides to attack immediately. However, it later becomes clear that these ‘enemies’ were just civilians and that the drone misclassified them. Who is to blame? The developers, as they built a drone that was not foolproof? The military, as they decided to deploy the drone? The people who bought it? The drone itself?

To be clear from the start: no one yet knows who is responsible, and legislation does not settle the question either. This problem is often referred to as the responsibility gap. Robert Sparrow, scientist at the School of Philosophy and Bioethics in Australia, also acknowledges this responsibility gap. He argues that if no one can be held responsible, there is a risk that everyone gets to treat their enemies as vermin. In addition, Sparrow claims that, because of the responsibility gap, killer robots violate International Humanitarian Law, as this law states that it must be possible to hold at least someone responsible for taking a life.

If the responsibility gap is not closed, everyone in the world is effectively left without the protection of the law: judges cannot convict anyone, and the authority of the law itself is undermined.

Lowering the Threshold

In an article in The New York Times, Phil Twyford, New Zealand’s disarmament minister, is quoted as saying:

“Mass produced killer robots could lower the threshold for war by taking humans out of the kill chain and unleashing machines that could engage a human target without any human at the controls”.

This threshold is lowered because countries are no longer forced to physically send people into a war zone. The risk of losing soldiers in a war may simply be too high relative to the potential reward. When it is possible to ‘just’ send out some robots, with which we have no such emotional relationship as we have with people, it becomes very easy to press the button. As the threshold drops, countries start wars with each other more easily.

In addition, the responsibility gap contributes to lowering the threshold, because it is hard to hold someone responsible for the actions an autonomous weapon takes. Someone with bad intentions can therefore start a war easily, as it is unlikely that they will be held responsible.

Apathetic Soldiers

One commonly mentioned argument for why killer robots may be advantageous is that they are more rational than human beings. BBN Times states that human warfighters have instincts that can hinder them in making proper life-or-death decisions, whereas robots simply do whatever they have been programmed to do. This raises the question of whether war would be fairer to all parties involved, and whether fewer ‘unwanted’ events would occur due to the reduced likelihood of human mistakes.

One thing that, in our opinion, is often overlooked is the possibility of emotional development in AI. Mo Gawdat, in his book Scary Smart, argues that AI will certainly develop emotions. Gawdat substantiates this by redefining the origin of emotions:

“I would argue that emotions are a form of intelligence in that they are triggered very predictably as a result of logical reasoning, even if that reasoning is sometimes unconscious.”

If there is one thing computers, and thus AI, are good at, it is logical reasoning. If emotions follow from logical reasoning, AI emotions will therefore very likely go far beyond human emotions. Furthermore, according to research, irrational behavior arises as a consequence of emotional reactions evoked when facing difficult decisions. Hence, greater emotional capabilities will likely increase the risk of irrationality. It is therefore questionable whether the rationality argument holds.

Robotic Front-Line

If we ever get to a point where human soldiers are replaced by robots, this will literally create a robotic front-line. War could then be fought through cyber-attacks and robots physically fighting each other. This would bring a revolution to warfare: no more human deaths. People would not even consider attacking these robots, as they would not stand a chance.

This sounds like a perfect alternative to the way warfare is currently waged. However, history has shown us that treaties, however carefully defined, tend to be violated. Take World War II, where six million Jews were murdered during the Holocaust. Why would we expect this to get better if we replace human soldiers with robots? What do the robots do when they’ve won the battle?

Even if replacing human soldiers with robotic ones leads to fewer casualties in a war, this doesn’t mean that the killing stops. In fact, even more fatalities could occur given the aforementioned lowering of the threshold. Moreover, today’s killer robots are not designed to fight other killer robots, but people. The future will tell whether this will shift towards robots, but imagine the devastating impact of warfare in which killer robots fight against humans.

Rush of AI-armaments

Arms races between countries have been going on for decades. Think of the arms race between North and South Korea, or the one between the U.S. and the Soviet Union during the Cold War. Although enormous amounts of weapons are developed and deployed, arms races tend to reduce the likelihood of war. In fact, disarmament can lead not only to peace but also to war. But does this deterrent effect also automatically apply to AI arms races? We do not think so.

PAX stated in 2019 that the US is investing 2 billion dollars in developing the ‘next wave of AI technologies’, including technologies specifically meant for warfare. This example shows that AI in warfare is taken very seriously and is therefore likely to become a greater part of future arms races.

The difference between AI arms races and traditional arms races is the degree of human control. For the first time in history, countries are deploying self-controlling weapons, meaning that human control is decreasing. If an autonomous weapon decides to attack without any human involvement during an arms race, this could very easily escalate and cause a new war. Hence, we conclude that AI arms races do not decrease the risk of war; in fact, they increase it.

Summing it all up

In this article we have shown why an immediate ban on killer robots should be considered. And we are not alone. Tesla’s frontman Elon Musk, co-founder and former head of applied-AI at DeepMind Mustafa Suleyman and 116 specialists across 26 different countries are calling for a ban on autonomous weapons. They state that “Once the Pandora-box is open, it will be hard to close.” Immediate action is therefore required, as we are approaching a “third revolution in warfare”.

However tempting killer robots may be for large, powerful countries, they might also be used against those same countries. In fact, as in any other war in history, there are no winners; only sacrifices are made.