The spread of Artificial Intelligence across many different domains is no longer news. Intelligent systems, self-learning algorithms, and interconnected technologies have become everyday tools in our society, often raising the concern that such systems might one day outsmart humans. However, this problem is not as immediate as other threats that come with the expansion of AI. The laws and international agreements currently in place need to be revised and updated in order to keep up with the issues that the adoption of intelligent tools is raising in many fields. In the next paragraphs, we examine five main areas in which the need for better regulation is clear: privacy, inequality, biases, legal responsibility, and developing countries. We then analyze three counter-arguments that have been made against legislating AI and try to refute them.

Why do we need newer rules?

Privacy

Privacy is without a doubt one of the major issues in a world where data collection has skyrocketed and has kept growing at a fast pace in recent years. The term Big Data is often used to describe the enormous quantities of personal information that are collected every day all around the world and are often analyzed by AI algorithms. Big Data comprises many types of information: browsing history stored in cookies, fingerprints used to unlock smartphones, credit card transactions, surveillance camera recordings, and much more. AI-powered surveillance systems are spreading quickly, and slightly less than 50% of the world's countries have decided to implement them. These systems, of course, need to live-stream and store recordings for later reference by law enforcement officials or courts. The concept of the Smart City, a city built around intelligent systems capable of self-regulating different aspects of urban life, is also becoming increasingly popular, with the first systems already deployed in various places around the world. Powering such cities requires collecting and processing huge amounts of data, as well as enough space to store it. Storing data online exposes it to two main threats: privacy breaches and unfair use. Personal data breaches are far from infrequent, and as the volume of Big Data grows, each breach could give hackers access to an enormous amount of information about many individuals all over the world. While this might seem to be only a cybersecurity problem, it is also a matter of legislation, since more specific rules on where data may be stored and how it may be collected are clearly needed. As for unfair use, it is not uncommon for less tech-savvy users to be misled into sharing their personal data by companies that provide unclear information about their data-handling policies.
These two problems raise clear doubts about the efficacy of current privacy laws, and the growing adoption of AI systems makes the development of new ones urgent.

Computer scientist Oren Etzioni proposed three rules for regulating AI systems effectively. One of them fits the scenario just presented very well: an AI system should not retain or disclose confidential information without explicit approval from the source of that information. In Europe, the General Data Protection Regulation (GDPR), adopted in 2016, already tried to tackle these problems by setting requirements for explicit consent, but in many other areas of the world the issue is still unresolved. Moreover, even in Europe the problems have not been fully solved, since most websites have not implemented fair and usable cookie notices (perhaps not even this one). New privacy legislation is needed, as well as more oversight of actual compliance with it.

A visualization of Big Data and related fields.

Image by Wikimedia Commons (Creative Commons Licence, no modifications).

Inequality

A second controversial issue is the enormous power that big-tech companies have gained over the past decades. Apple, Google, Amazon, Microsoft, and Facebook have grown exponentially and now, more than ever, they record enormous revenues. However, this comes at a cost: small businesses are being pushed out by automation and monopoly. For instance, one may think that the replacement of humans by robots concerns only future generations rather than ours, but this belief is mistaken. Waymo, the self-driving car company owned by Google's parent Alphabet, already operates fully driverless cars in a limited area, and other companies such as Tesla and BMW plan to do the same in the next few years. Will we still need taxi or truck drivers five years from now? The evidence suggests that automation might soon make them unnecessary. Furthermore, the Coronavirus pandemic of the past year has shown that big-tech companies are largely resilient to crises. Amazon, for example, which uses AI in many areas such as warehouse automation and product recommendations, has come to dominate online retail while already replacing human labor with robots for several tasks. It is true that the expansion of big tech is also creating new job opportunities. Unfortunately, according to many researchers, these newly created jobs require highly skilled labor, which may be out of reach, both educationally and economically, for workers such as former taxi or truck drivers. One possible way to avoid an excessive concentration of power in the very few companies that rule the markets could be a robot tax. Such a tax could ease unemployment and inequality in two ways. First, it could provide financial support to people who have recently lost their jobs because of automation. Second, its revenue could fund free training courses for people who wish to change careers and acquire the skills needed to keep pace with automation.

Biases

Machines and AI affect people's lives in a variety of ways, and in some cases biased AI has resulted in injustice. One example that caused a lot of stir came in October 2019, when Ziad Obermeyer, who studies machine learning and health-care management at the University of California, Berkeley, and his colleagues showed that an algorithm used in the American healthcare system to estimate patients' risk levels was racially biased: it tended to assign lower risk scores to black patients than to white patients who were equally sick. The bias arose because the algorithm used past healthcare costs as a proxy for medical need, and less money had historically been spent on black patients. Another important case involved Amazon's experimental AI recruiting tool, designed to identify the best candidates from among thousands of resumes. Unfortunately, the system was trained on the resumes the company had received over a ten-year period, most of which came from men, and by 2015 the company realized that it had learned to favor male candidates over female ones. Cases like these are becoming more common as AI evolves rapidly and is adopted in more areas. Scholars and researchers have tried to identify the most common errors that cause bias in artificial intelligence. A perfect solution is hard to find, but several best practices can reduce, though not eliminate, bias. Since the problem cannot be overlooked, regulations on AI and bias should be created. One possible solution would be to require companies that build AI systems to have their software tested by an independent external company before it goes into production. Such auditors should be regulated by international agreements and required to apply the best practices identified so far for fighting bias. A final test performed by people outside the project could catch many biases that the original developers missed.
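As one hypothetical illustration of what such an external audit might check, the sketch below computes a simple group-fairness metric: the ratio between the rate of positive outcomes for a protected group and for a reference group, often called the disparate impact ratio. It flags the model when the ratio falls below 0.8, a threshold borrowed from the "four-fifths rule" used in US employment-discrimination guidance. The decisions, group labels, and function names are invented for this example, and a real audit would combine several metrics rather than rely on this one.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the fraction of positive decisions (1) for each group label."""
    positives = defaultdict(int)
    totals = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(decisions, groups, protected, reference):
    """Selection rate of the protected group divided by that of the reference group."""
    rates = selection_rates(decisions, groups)
    if rates[reference] == 0:
        raise ValueError("reference group has no positive decisions")
    return rates[protected] / rates[reference]


def audit(decisions, groups, protected, reference, threshold=0.8):
    """Return the ratio and whether it meets the (assumed) four-fifths threshold."""
    ratio = disparate_impact_ratio(decisions, groups, protected, reference)
    return ratio, ratio >= threshold


# Hypothetical outputs of a resume-screening model: 1 = shortlisted, 0 = rejected.
decisions = [1, 1, 1, 0, 1, 0, 1, 0, 0, 0]
groups = ["m", "m", "m", "m", "m", "f", "f", "f", "f", "f"]

ratio, passed = audit(decisions, groups, protected="f", reference="m")
print(f"disparate impact ratio = {ratio:.2f}, passes = {passed}")
```

With these made-up decisions, 4 of 5 men but only 1 of 5 women are shortlisted, so the ratio is 0.25 and the audit fails. A single number like this cannot settle whether a system is biased, which is one reason independent testers would need an agreed set of best practices.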

Responsibility

Furthermore, as the years go by and more AI systems reach the market, we are realizing that AI is not perfect. Biases and insufficient training are just some of the causes. These problems can be very hard to detect, and sometimes they result in catastrophe. A well-known example occurred in March 2018, when an Uber self-driving test car killed a woman who was crossing the road with her bicycle. Investigations showed that two factors contributed to the tragedy. First, the back-up driver, Rafaela Vasquez, was looking at her smartphone instead of monitoring the road. Second, the car's sensors detected the victim about six seconds before the impact, but the system failed to classify her correctly as a pedestrian. In this case, the fault lay on both sides: the back-up driver was supposed to watch the road, and the self-driving software developed by the company made a mistake. However, prosecutors declined to charge Uber, and only Rafaela Vasquez was charged. Is this a fair outcome? Suppose that a few years from now a fully autonomous car accidentally kills a person: who will be responsible? Unfortunately, at the moment this question is difficult to answer. The laws that determine who is liable for errors made by AI are unclear, and within a company it is hard to establish exactly who is at fault. As the online magazine Slate puts it: "But in criminal law, there is no real corollary to the doctrine of vicarious liability. Corporations cannot go to jail. And it is often difficult to identify a single culpable actor in these cases even when a criminal act has been committed. So when prosecutors need a target in the name of public accountability, it is the 'servants' who often pay the price." New regulations could therefore help solve this problem, which needs to be addressed as soon as possible.

Sketched Representation of a Self-Driving Car system.

Image by Wikimedia Commons (Creative Commons Licence, no modifications).

Developing Countries

Lastly, new regulations for Artificial Intelligence could greatly benefit people living in developing countries. AI has proved useful in many different fields, such as disease diagnosis (for both humans and crops), security systems, and farming. In addition, Smart Cities could have a big impact on quality of life in such countries by helping to deal with urbanization, waste management, and transportation. All this, of course, cannot happen without proper investment by big companies and wealthier nations. It would therefore be beneficial for new AI and technology regulations to include specific incentives for investing in developing countries, somewhat similar to the robot tax mentioned earlier. Actively asking companies and governments to invest part of their money in projects that would improve the economy and safety of poorer countries certainly seems like a good idea. Naturally, transnational regulations and international agreements would be needed to prevent these countries from becoming testing grounds for the technologies of big companies and governments, while ensuring fair and equal treatment with respect to all the issues presented in the previous sections. This last point suggests the need for an international body in charge of regulating and overseeing AI, which could help keep AI regulations fair and shared across countries and ensure an even development of the technology.

On the other hand…

People’s Responsibility

When it comes to AI regulation, there are always opposing views. Bill Gates and Elon Musk have expressed concern about the problem and warned that we must be cautious about the evolution of AI. Musk, specifically, said: "AI is the rare case where I think we need to be proactive in regulation instead of reactive. Because I think by the time we are reactive in AI regulation, it'll be too late." As many know, the world is full of people who tend to prioritize their own interests, such as financial gain and power. Letting such people develop AI without any regulation could end in catastrophe. However, some industry leaders do not see it the same way. One of them is Facebook CEO Mark Zuckerberg, who is optimistic about AI and its future. In a Facebook Live broadcast, he said that in the next five to ten years AI will deliver many improvements in our quality of life. He also stated his view openly: "Whenever I hear people saying AI is going to hurt people in the future, I think yeah, you know, technology can generally always be used for good and bad, and you need to be careful about how you build it and you need to be careful about what you build and how it is going to be used," but he did not mention the need for any regulation. Is it correct to assume that humans are responsible enough to develop AI that acts for humanity's common good? The paper Cooperation and Group Size in the N-Person Prisoners' Dilemma seems to contradict this idea: it shows that larger groups are more likely to contain inherently noncooperative individuals. Given population growth, the number of people acting only for their own benefit can be expected to increase in the coming years. In conclusion, developers and companies do not always act for the common good, and the situation may worsen in the future. Therefore, regulations are needed.
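The group-size argument can be made concrete with a deliberately simplified model, which is our own assumption and not the model used in the cited paper: if each person is independently noncooperative with some fixed probability p, a group of n people contains at least one noncooperator with probability 1 - (1 - p)^n. This grows with n even though the share of noncooperators stays the same. The value p = 0.05 below is purely illustrative.

```python
def prob_at_least_one_defector(n, p):
    """Probability that a group of n people contains at least one noncooperator,
    assuming (hypothetically) that each person is independently noncooperative
    with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    # Complement of "everyone cooperates", which has probability (1 - p) ** n.
    return 1.0 - (1.0 - p) ** n


# Hypothetical share of noncooperative individuals: 5%.
p = 0.05
for n in (2, 5, 10, 50, 100):
    print(f"group size {n:>3}: {prob_at_least_one_defector(n, p):.3f}")
```

Running the loop shows the probability rising steadily toward 1 as the group grows, which mirrors the intuition in the text without depending on the paper's specific experimental setup.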

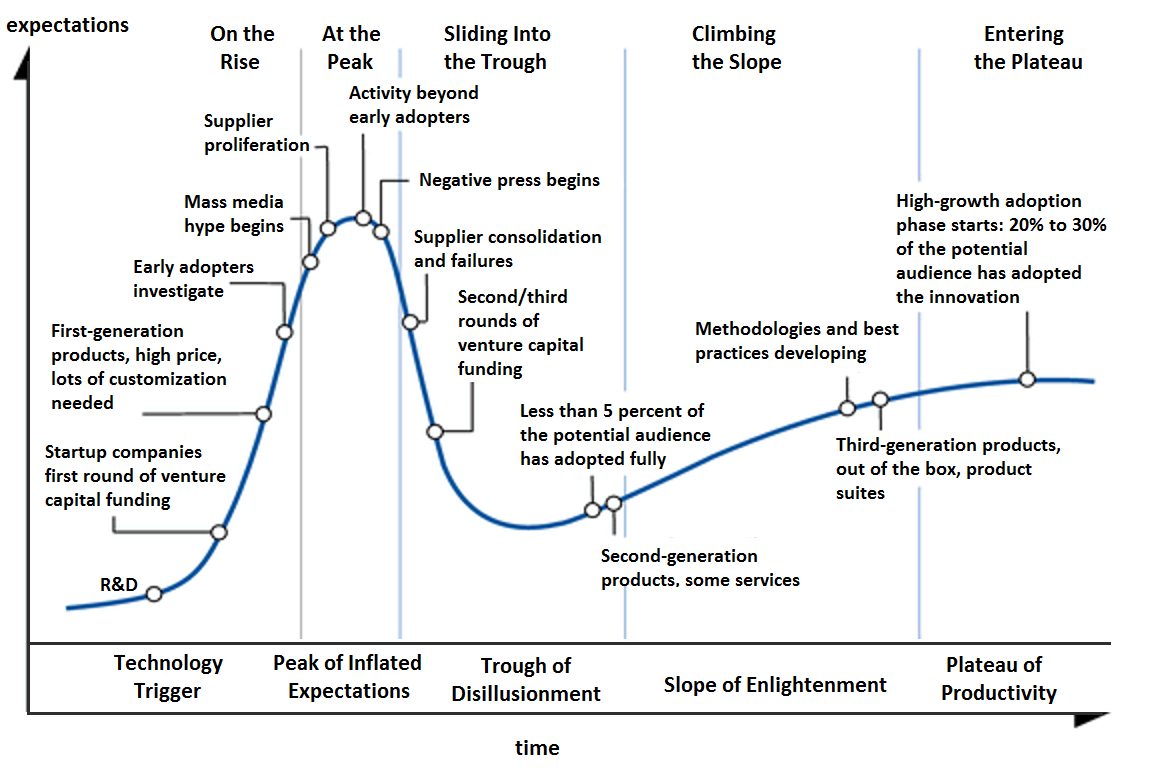

Harming AI's Potential

A second counter-argument comes from the history of the internet. Comparing its early years with what we see today, it appears that the relative lack of strict regulation allowed it to become what we know now: a powerful and almost essential tool that connects billions of people around the world, enables the fast and immediate exchange of information, and helps manage complex systems such as railways and airports. The question inevitably arises whether regulating AI might somehow endanger its potential for the future of humanity. Throughout history, people have always been skeptical (and in some cases very pessimistic) about the newest technological advances, yet in most cases these technologies have grown so much and become so deeply intertwined with our existence that it is hard to imagine modern life without them. Artificial Intelligence is without a doubt one of the most promising fields, and it might seem wrong to excessively limit its potential with laws and regulations that could impair its future applications. However, researchers at Gartner have developed the concept of the "Hype Cycle", showing that the development of most technologies follows a similar path, with inflated expectations at the beginning and much more modest actual developments in the end (see figure below). In light of this, regulating AI does not appear too harmful, given that most current expectations are unlikely to materialize in the coming decades. On top of that, regulating AI does not necessarily mean harming its potential or ruining its future, but simply drawing appropriate boundaries so that the technology does not do more harm than good.

General Gartner Research’s Hype Cycle Diagram.

Image by Olga Tarkovskiy (Creative Commons Licence, no modifications).

Difficulties in Regulating

One last argument against new legislation is that Artificial Intelligence is difficult to regulate because it is a broad ensemble of techniques and models. Chief Privacy Officer Andrew Burt defended this position in an article in the New York Times, stating that AI comprises many different applications. He argued that, to effectively tackle possible problems, regulations should only address specific issues rather than take the form of broad legislation. That AI is a broad field, made up of many different techniques and technologies, cannot be denied. However, this fragmentation should not prevent institutions from recognizing the problems present in the realm of Artificial Intelligence and trying to solve them. New regulations should not take the form of a single general law for AI, but should address the specific issues hidden in the different techniques, while being coordinated internationally. Moreover, Artificial Intelligence should not be treated as a separate technology, since it is deeply intertwined with many other disciplines. The goal should not be just to regulate AI, but to develop new policies that take the whole world of technology into account without overlooking the smaller issues present in every corner. To do so effectively, nations need to collaborate, as already stated. Experts in the field have also highlighted the need for agreements and uniform rules among most countries in the world, as this would ensure a consistent and steady development of the same types of technologies.

In a nutshell

New laws and regulations for Artificial Intelligence are much needed, especially given the issues that have kept arising in recent years. Topics such as privacy, biased algorithms, and responsibility have already received a lot of attention, but governments and international bodies need to do more work to develop effective legislation on the matter. Problems of inequality and the need to help developing countries by providing them with intelligent systems have also surfaced in recent years. While studying and implementing new rules for all these issues is paramount, we have also argued that there needs to be more active oversight of whether such laws are effectively enforced.