We humans are in danger. Issues such as climate change and shortages of non-renewable resources make it evident that our current way of living is unsustainable. Furthermore, we could be facing threats not just from outside, but also from the inside. With the rapid advancement of AI, the popular prophecy of robots taking over humanity could become reality. How can we face all these problems? The solution: stepping beyond our boundaries and becoming transcendent.

Why will humanity perish?

Superintelligence

Currently it might seem very premature to talk about the widely dramatized scenario of an AI takeover, since AI today exists mostly at the stage of narrow AI. Siri, Cortana, Alexa and Google Assistant are all good illustrations of narrow AI: “Narrow AI is a specific type of AI in which a learning algorithm is designed to perform a single task and any knowledge gained from performing that task will not automatically be applied to other tasks”. This means that when you ask Google Assistant to turn off your smart lights, the AI behind it does not infer anything else (the time, what you asked before and after, the words you used, the syntax, the tone). It simply uses speech recognition to extract the semantics of the request, then speech synthesis to respond, and performs the task. This is unlike AGI (Artificial General Intelligence), which aims to mimic humans' complex thought processes.

Therefore, AI still has a long way to go to reach what we call “strong AI”, or AGI, but this does not mean that we should not anticipate what could happen. Indeed, progress in science is often slow and gradual, but very sudden once it is implemented in society, and we have a hard time evaluating its impact: estimating its side effects, its collateral or indirect damage, and so on.

Essentially, once a problem or a need is resolved, it seems unavoidable that other problems will surface from the very solution to the initial problem, and those are overlooked by scientists. This has happened many times before in our history. We saw it less than two decades ago with the democratization of smartphones. Notably, the debate on the mental as well as physical consequences that smartphones could have arose only years after they appeared on the market. For instance, there is debate about whether the radiation they emit is harmful to some of our organs, and about the consequences of smartphone use on the brain (particularly for teenagers).

We are still at the stage of narrow AI: the advances in AI (natural language processing, computer vision, speech recognition) are built for very specific or constrained applications, not for a dynamic environment. Thus, we want to develop a certain “common sense”, but it is difficult to know the limits of “common sense”. For a specific situation, there are so many scenarios that could play out, and our reactions to them are often hard to make explicit. The human “common sense” comes from conscious experiences, so if we manage to create consciousness, AI will be able to respond better to never-before-encountered and unexpected situations.

Taking over humanity

When people raise the prospect of an AI takeover, they seem to have a certain image of AI in mind. They see AI as a black box, functioning on its own beyond human intervention or escaping human control, and maybe developing a consciousness (self-awareness, awareness of its environment) and even emotions.

It has been the subject of many science-fiction novels, movies and television shows: AI might gain independence from its creators and even, why not, threaten them, come for revenge and replace humans as world rulers. If we go further, artificial intelligence can be likened to an all-knowing God in these anticipations. This plotline is also more and more present in the public space through non-fiction content (newspapers, various seminars, talks, documentaries, scientific literature and papers…), which contributes to making it seem more serious.

It seems clear that even if the public might not really be interested in the topic, the idea that AI is a threat to society is evoked almost every time someone mentions AI. Indeed, the dystopian view of AI has invaded the collective subconscious of our era.

According to Nick Bostrom, who is not an AI researcher but a philosopher, superintelligence is a real threat to human society. He considers it to be an existential risk which makes it part of “risks that threaten the destruction of humanity’s long-term potential.”

The hypothesis is that an “intelligence explosion” will happen if our technology keeps improving at the same rate, which will result in what we call a technological singularity: a hypothetical point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization. In short, a point of no return for human civilization.

Existential risks are a sub-class of global catastrophic risks, where the damage is not only global, but also terminal and permanent (preventing recovery and thus impacting both the current and all subsequent generations).

Natural threats

Climate Change

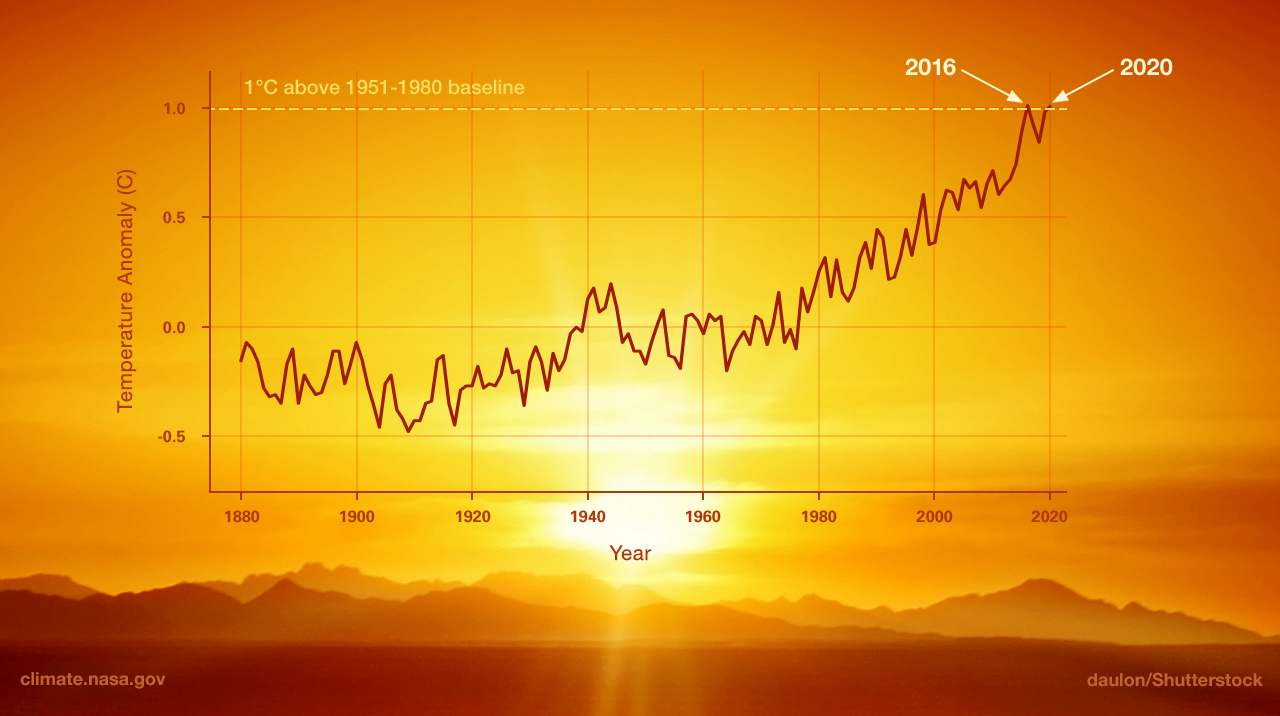

With the industrial revolution, our modern age has brought a new kind of problem, which became evident in the 1980s: climate change. Even though it is not an imminent threat, we can already see its effects on our world. The term is often used interchangeably with global warming, although the two are slightly different. The cause of this phenomenon is the so-called “greenhouse effect”, and we are almost the sole contributors to it due to the burning of fossil fuels. We may not see it now, but what are the long-term effects?

- Melting ice

A 2016 study found that there is a 99% chance that global warming has caused the recent retreat of glaciers and sea ice. In 2020, summer sea ice hit the second-lowest extent ever recorded, and some scientists think the Arctic Ocean will see ice-free summers within 20 or 30 years.

- Heating up

Many already-dry areas are expected to get even drier as the world warms. A study predicted an 85% chance of droughts lasting at least 35 years in western North America by 2100. Droughts, in turn, can set the stage for devastating wildfires. A 2014 study found that many areas will likely see less rainfall as the climate warms, with subtropical regions suffering the most.

- Extreme weather

Hurricanes and typhoons are expected to become more intense as the planet warms. That means more wind and water damage on vulnerable coastlines. Paradoxically, climate change may also cause more frequent extreme snowstorms.

- Ocean disruption

When carbon dioxide from the polluted air reacts with seawater, the pH of the water declines (that is, it becomes more acidic), a process known as ocean acidification. This increased acidity eats away at the calcium carbonate shells and skeletons that many ocean organisms depend on for survival. These creatures include shellfish, pteropods and corals.

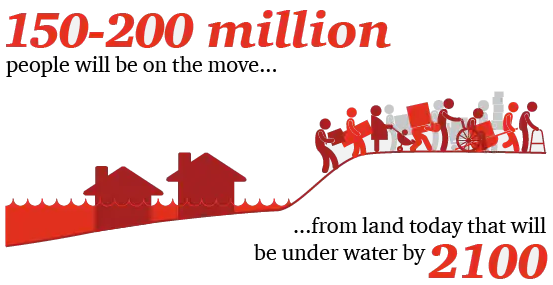

- Rising sea levels

The current estimate is that sea levels will rise by approximately 2 meters by 2100. Warming of 1.5 degrees suggests an increase of six to seven meters by 2500. That would leave a huge proportion of the eastern seaboard of China, for example, underwater. The impact is already being felt in low-lying coastal cities.

Resource Scarcity

Another problem that closely ties in is the shortage of our resources. While scientific debate continues, we believe the fundamental analysis of the challenge facing the planet is correct: the planet is unable to support current models of production and consumption. A growing global population is expected to demand 35% more food by 2030. The interconnectivity between trends in climate change and resource scarcity is amplifying the impact: climate change could reduce agricultural productivity. In short, the world’s current economic model is pushing beyond the limits of the planet’s ability to cope.

Solution: Transhumanism

Transhumanism is a class of philosophies of life that seek the continuation and acceleration of the evolution of intelligent life beyond its currently human form and human limitations by means of science and technology, guided by life-promoting principles and values.

Max More (1990)

What really is transhumanism? Transhumanism is the expansion of the human intellect (and even body) through technology. The idea is not new, and we can already see real-life practices such as prosthetics, hearing aids and even false teeth. Why not go further? In the future we could use implants to see and hear things we couldn’t before, or boost our cognitive processes via memory chips, and at the end of the line we could merge with machines completely.

If no such crisis comes along in the near future, which is possible, we do not have to conclude that transhumanism is useless. In fact, transhumanism could very well help us with an array of issues. Even the physically and mentally strongest humans on the planet are still very fragile, because the human body and mind rest on a very subtle balance. Technology could help us with these frailties. Failing organs would be replaced by longer-lasting high-tech versions, just as carbon-fibre blades could replace the flesh, blood and bone of natural limbs. Thus we would upgrade “our frail version 1.0 human bodies into a far more durable and capable 2.0 counterpart”. Transhumanists also interpret the singularity in a favorable light. For them, it is the point at which AI surpasses human intelligence, which will allow the convergence of human and machine consciousness. That convergence would greatly improve our generally miserable lives by increasing human consciousness, physical strength, emotional well-being and overall health, and would greatly extend human lifetimes.

Transhumanist technology will do much for the world that the world can’t really imagine yet, including overcome some of the climate issues the world is facing.

Zoltan Istvan

Transhumanism and posthumanism are often confused with each other, but they are different concepts. While posthumanism reconsiders what it means to be human given the advance of technology in our lives, transhumanism actively promotes human enhancement.

It will come as little surprise that the man who wants to make man a “multi-planet species” is also in favor of transhumanism. Elon Musk argues that to be able to compete with AI, we have to be enhanced with chips (this is the main idea on which Musk’s Neuralink is based). Technology can help us treat diseases as well as push our current limitations. For example, athletes are expected to run faster with carbon-fibre blades than with organic legs in a few years. We could also connect our brains to technology to improve our memory, mood control and attention span, and to treat brain disorders (Alzheimer's, schizophrenia, etc.). The brain remains a mystery to scientists to this day; maybe the way to pierce through the smoke screen is nanotechnology and cybernetics.

Three main approaches are distinguished in this paper, and they are useful for understanding what role strong AI could play in tomorrow’s society.

There is the technology-centric perspective, which claims that humans are flawed and biased, and that our own creations are substantially “better” than us at making smart decisions. This approach is the one that could potentially lead us to an AI takeover if we don’t make sure that our creations remain under our control.

There is the hybrid-intelligence perspective, which is the closest to transhumanism. This is a human-centric perspective that claims we are complementary with AI. In this model, AI can only ever exhibit part of human cognition.

There is the collective-intelligence perspective, which we haven’t discussed here yet. Its proponents essentially suggest that communication and collaboration between AI systems and humans is the best way to go. This would mean that we need to optimize human-computer interaction as much as possible.

Pros & cons of alternatives and concerns

Every extreme idea such as this brings a natural amount of skepticism with it. A number of questions come to mind. If we keep augmenting ourselves, how human do we remain? Will we still be human at all? Is it safe? For the first two questions, futurist Ray Kurzweil has a definitive answer: “There will be no distinction between human and machine or between physical and virtual reality. If you wonder what will remain unequivocally human in such a world, it’s simply this quality: ours is the species that inherently seeks to extend its physical and mental reach beyond current limitations.”

The third question is the toughest one. Wondering whether this will be safe is the same as asking whether a gun or a knife is safe. The answer is the same for all of them: it depends. Since this is a tool, its safety is in the hands of the user, in other words, us. There is recent research into how we can “hack” the brain. While it hasn’t yielded life-changing results yet, the possibility of curing disorders and other hindrances is there. History has shown us that with every technology we try to improve our quality of life, and I believe transhumanism is no exception.

Are there any alternatives we can utilize without AI getting out of hand? Currently we are already using AI to help us fight climate change, but there is no evidence that it is enough help to overcome our problems.

On another note, limiting the evolution of AI at this point is possible, but would we really go against our nature? Pushing the boundaries of our existence has been a staple of our species, and so far we have seen that the benefits of any technological advancement have far outweighed its risks.

Conclusion

It remains arguable whether becoming transhuman is a desirable outcome at all. What is certain is that we should consider both scenarios, the utopia and the dystopia, in order to reach the best possible outcome. Thinking about those matters in advance allows us to minimize the risks and optimize our prospective shift to transhumanity.

One thing we have understood through this article is that transhumanism hypothesizes that AI will turn into a dominant, fearsome ASI (Artificial Super Intelligence) in the future and that, instead of making AI a separate system, we could integrate it into ours. Have we not done this before, with smartphones, which some argue are extensions of our hands, or with artificial hearts and mechanical ventilators? Is this incomparable? Maybe the real difference would be to have a mechanical ventilator not just when we have a severe case of COVID-19 but at all times. That is, upgrading ourselves rather than only curing ourselves.