“Now I am become death, the destroyer of worlds” is one of the most famous quotes of the last century, and it reached a wide audience last year through the movie Oppenheimer. The architect of the atomic bomb came to see his creation differently once he understood the incalculable damage it could do and realized that the world would never be the same again. Anyone who has watched the development of Artificial Intelligence (AI) in recent years can see that a new “Oppenheimer Moment” is being born. While AI was initially embraced for its capabilities and seemingly limitless scalability, this rosy development also has a dark side. This side, which is often overlooked, is the negative effect AI has on the climate crisis. After reading this op-ed, it will be clear that the gains do not outweigh the negative aspects, leaving us with regret for the AI-driven developments in the climate crisis.

AI’s Massive Energy Consumption

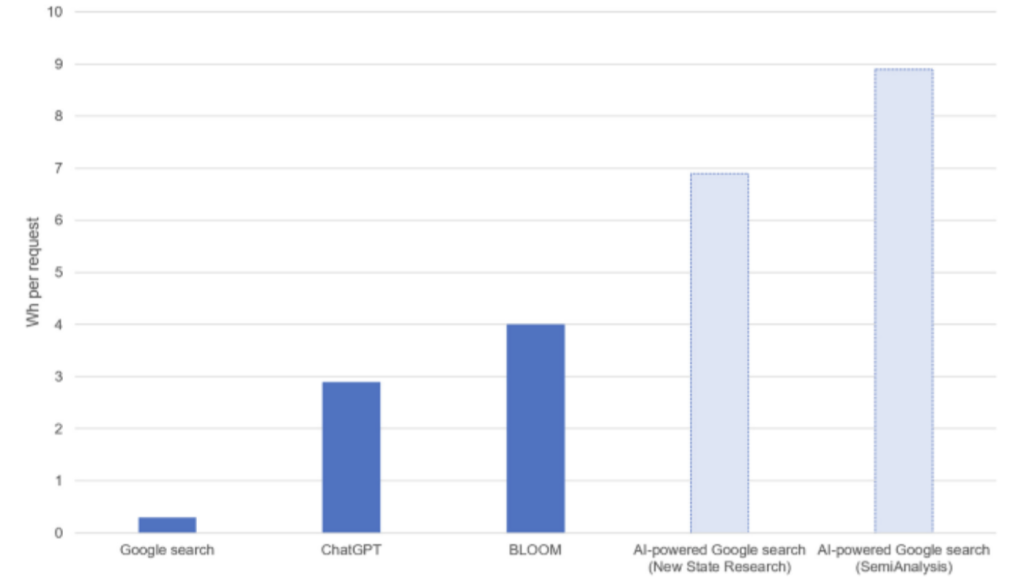

A prominent disadvantage of AI that has long been ignored is its enormous energy consumption. As generative AI has surged in popularity, so has the energy it consumes. AI companies use large supercomputers to train the language models behind their chatbots, and a supercomputer may run for several weeks to train a single one. Estimates indicate that OpenAI used 1287 MWh of energy to train the now older model GPT-3. This is equivalent to 502 tons of CO2 emissions, or one person flying from Amsterdam to Istanbul and back 630 times. Some people might argue that thousands of planes fly every day and that this one-time investment benefits millions of people for a long time. We can’t deny either claim. However, ChatGPT is not the only chatbot on the market. Technology companies such as Google, Microsoft and Meta are all eager to join this new trend, and each new language model is bigger than the last, driving energy consumption up. Besides training, inference also demands enormous amounts of energy because of the millions of users every day. It is estimated that a single ChatGPT query costs 10x as much energy as a Google search query. To operate ChatGPT, OpenAI consumes about 564 MWh per day, equivalent to the daily energy consumption of almost 75 thousand Dutch households. Scientists warn that AI could soon consume as much energy as an entire country. Unfortunately, we cannot suddenly make AI much more efficient. Although improvements in technology could prove useful, LLMs only learn well by working through millions of examples. This makes high power consumption an inherent property of AI.
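To make the comparisons above easier to check, the short Python sketch below derives the conversion factors that the quoted figures imply. It uses only the numbers already cited in this section; the constant names and the `implied_figures` helper are our own illustrative choices, and the results are back-of-the-envelope ratios, not independent measurements.

```python
# Figures quoted in the text above (taken as given, not independently verified).
TRAINING_ENERGY_MWH = 1287   # reported energy used to train GPT-3
TRAINING_EMISSIONS_T = 502   # reported CO2 emissions of that training run, in tons
ROUND_TRIPS = 630            # Amsterdam-Istanbul round trips quoted as equivalent
DAILY_INFERENCE_MWH = 564    # estimated daily energy used to operate ChatGPT
HOUSEHOLDS = 75_000          # Dutch households quoted as equivalent (rounded up)

KWH_PER_MWH = 1000
KG_PER_TON = 1000


def implied_figures() -> dict[str, float]:
    """Derive the ratios implied by the quoted numbers (hypothetical helper)."""
    training_kwh = TRAINING_ENERGY_MWH * KWH_PER_MWH
    return {
        # Carbon intensity of the electricity assumed in the emissions estimate.
        "kg_co2_per_kwh": TRAINING_EMISSIONS_T * KG_PER_TON / training_kwh,
        # Emissions attributed to one passenger's round trip.
        "tons_co2_per_round_trip": TRAINING_EMISSIONS_T / ROUND_TRIPS,
        # Daily electricity use assumed for one Dutch household.
        "household_kwh_per_day": DAILY_INFERENCE_MWH * KWH_PER_MWH / HOUSEHOLDS,
        # Days of inference needed to match the energy of one training run.
        "inference_days_per_training_run": TRAINING_ENERGY_MWH / DAILY_INFERENCE_MWH,
    }


if __name__ == "__main__":
    for name, value in implied_figures().items():
        print(f"{name}: {value:.2f}")
```

Taken at face value, the quoted figures imply roughly 0.39 kg of CO2 per kWh, about 0.8 tons of CO2 per round trip, and about 7.5 kWh per household per day. They also imply that running ChatGPT uses as much energy as the entire GPT-3 training run in a little over two days, which is why inference matters at least as much as training.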

The Forgotten Side Effect Of E-waste

Besides the enormous energy consumption of AI, there are other, less discussed consequences of AI for climate change. One of the most overlooked is that building the hardware required for AI comes at the expense of scarce resources. An additional problem is that, as new hardware is developed and produced, the old hardware it replaces creates a mountain of electronic waste, better known as e-waste. The common response to this issue is that e-waste can still be properly recycled. What is often overlooked, however, are the consequences and risks involved. Through recycling, small percentages of copper, iron, silver and nickel are extracted and then sold. Because this process is often too expensive for developed countries and the profits are too low, the task is outsourced to developing countries such as India and Pakistan. Extracting these valuable metals releases heavy metals such as lead, cadmium and chromium. Given the health risks of exposure to these heavy metals and the associated emissions that contribute to the climate crisis, we should ask ourselves whether this recycling is worth the potential harm.

The Impact Of Data Centers On Our Ecosystem

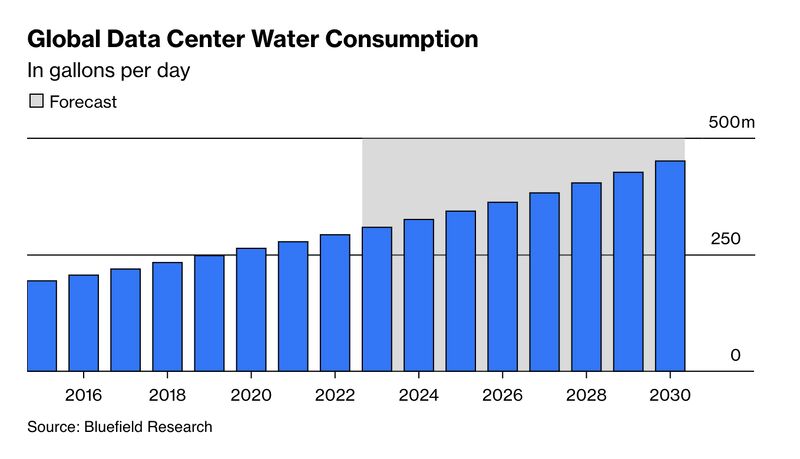

All of this hardware needs to be housed somewhere, and this is where data centers come in. AI algorithms are almost always computed in “the cloud”. To the user this sounds airy, but ironically, reality could not be more different. The cloud runs on thousands of data centers jam-packed with electronics that use large amounts of energy and produce even more heat. To cool the computers inside, data centers almost always use water. In the US alone, they use 1.8 billion liters of water every day. This can cause several problems for local ecosystems, such as water scarcity. It might disrupt the natural flow of rivers or reduce the water available for agriculture and drinking. Furthermore, it often causes severe damage to the surrounding aquatic ecosystem.

A Small Chance For The Opposing Side

To give the other side a small chance anyway, we looked at the optimistic perspective of those who believe that the climate crisis can be solved with AI. You soon land on articles describing AI tools that help address the climate crisis. All sorts of solutions have been developed, such as optimizing agriculture, supply chains and energy grids, and some argue that these technologies will help us solve the climate crisis. We can all agree that the savings in resources sound promising. However, the cost of developing these technologies is often overlooked. In many cases, the gains are marginal and are outweighed by the development costs. It is easy to join the hype around AI, and rightly so: it is capable of achieving incredible things. But we should not become blind to its drawbacks. A paper by A. Ligozat takes a critical look at the environmental impact of new AI applications and finds that almost all of them lack a good environmental evaluation. She finds that deploying a new AI application has impacts of several orders. The direct impacts, such as development and running costs, are obvious and thus often considered. Nonetheless, there are always indirect impacts, such as societal changes, which are almost always overlooked. Furthermore, some of the solutions induce the use of even more AI systems, driving up energy consumption. An example often used by opponents of our position is the potential to mitigate the climate crisis by replacing emitting passenger cars with electric self-driving cars, with an emphasis on the convenience and benefits of this transition. What is overlooked are the arguments given in this op-ed, which illustrate the negative impact of these AI-driven mechanisms on the climate. The convenience of the AI-driven technology in the self-driving car causes a shift from low-carbon options (such as the train) to the car, which ultimately, without the user being aware of it, reinforces the climate crisis.

Conclusion

It can be concluded that AI reinforces the climate crisis and continues to worsen our environment. After reading this op-ed, it has become clear that AI’s high energy consumption affects the climate and thereby reinforces the climate crisis. Additionally, the processing of e-waste releases toxic metals into the atmosphere and thereby worsens the health of people in developing countries. Furthermore, the increased demand for AI has brought to light that data centers, which require substantial amounts of cooling water, contribute to the deterioration of our ecosystem and to water scarcity. Finally, some people argue that new developments in AI could help to save energy and resources. The small chance given to the opposing side in this op-ed is ultimately undercut by the arguments for our own position. We also found that AI applications lack proper environmental evaluation and that there is little awareness of their consequences. This leads to the conclusion that the costs are not outweighed by the returns.

So without a doubt, an Oppenheimer moment is born. As Oppenheimer himself said, “No man should escape our universities without knowing how little he knows”, which captures how unaware people still are of the negative effects of AI on the climate crisis. That awareness will grow over the years, and that is when the real regret begins.