Electricity, telephones, personal computers and the internet are only a few of the inventions that have changed the entire trajectory of humanity. Major innovations like these alter life as we know it and upend the established order of things. One of these major technological breakthroughs is on the horizon. Are we ready for it?

The Singularity is the hypothetical point in time at which artificial intelligence exceeds human intelligence. It is the point at which the technology we create grows beyond the capacity of human understanding, and reaching it is expected to trigger uncontrollable and irreversible technological growth. The arrival of such a point depends on ‘Artificial General Intelligence’ (AGI), that is, an intelligent system capable of solving different problems across a wide variety of domains. An AGI could do everything we humans can, and more. It would have the power to make an enormous difference in the world, and with each new innovation in AI, it seems less like fiction and more like reality.

From AI-based websites that personally coach you for your next big presentation to sites that create stock footage or personalised videos about whatever you want, we already seem to be living in a world where technology can perform tasks that used to rely on human skills. However, pinning down a timeline for the Singularity is difficult, and it is something on which even experts disagree. One problem has been the lack of good metrics for measuring technological progress. While hardware improvements can be measured in increased processing speeds or in more transistors fitting on an integrated circuit, progress in AI is much harder to quantify. It depends not only on hardware but also on large, comprehensive datasets and clever algorithms.

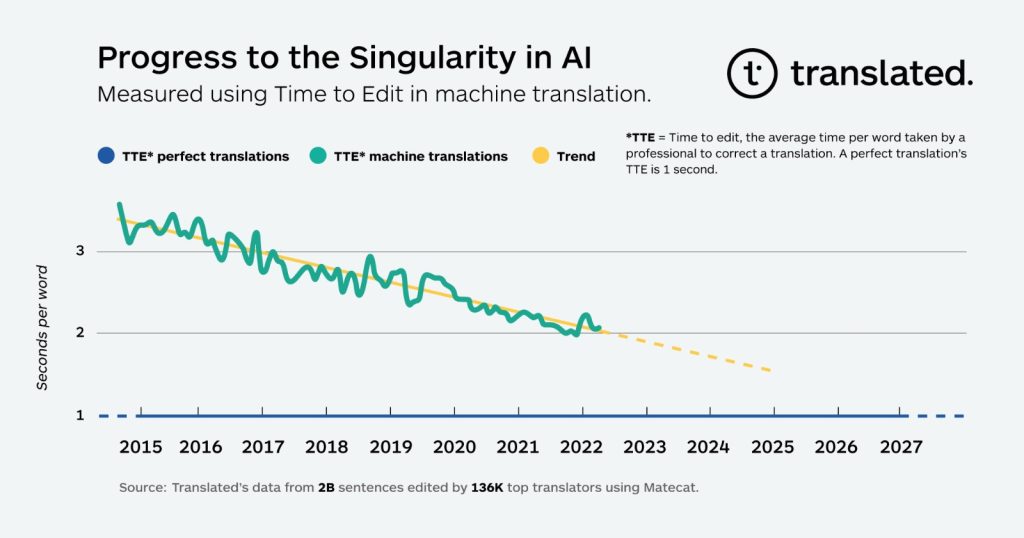

One translation company, Translated, created a metric to predict when the Singularity could be achieved in the field of machine translation. In translation, quality is difficult to judge with an automated measure, so the company measures human cognitive effort instead. This metric, called ‘Time To Edit’ (TTE), measures the time per word a professional translator needs to spend fixing text translated by a machine or by another human translator. Over the last five years, the TTE per word of the company's machine translations has dropped from 3 to 2 seconds, following a linear trend. With larger datasets and better language models, the company expects this score to drop to 1 second, matching the time needed to edit a translation produced by a professional human translator. By this measure, the Singularity in translation could be reached within the next five to ten years.
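To make the extrapolation concrete, here is a minimal sketch that fits a straight line through only the two data points quoted above (3 seconds per word five years ago, 2 seconds per word today) and solves for when TTE would reach the 1-second target. The two-point linear model and the helper names `linear_trend` and `years_until_target` are illustrative assumptions; this is not Translated's actual methodology, which presumably draws on far more data.

```python
def linear_trend(t0, y0, t1, y1):
    """Return (slope, intercept) of the straight line through (t0, y0) and (t1, y1)."""
    slope = (y1 - y0) / (t1 - t0)
    intercept = y0 - slope * t0
    return slope, intercept


def years_until_target(t_now, slope, intercept, target):
    """Years from t_now until the fitted line reaches `target`.

    Assumes the trend stays linear and is decreasing (slope < 0).
    """
    if slope >= 0:
        raise ValueError("trend is not decreasing; target is never reached")
    t_target = (target - intercept) / slope  # solve target = slope * t + intercept
    return t_target - t_now


# Illustrative data from the text: TTE fell from 3 s/word to 2 s/word over five years.
# Time is measured in years from the start of that period (year 0 to year 5).
slope, intercept = linear_trend(0, 3.0, 5, 2.0)
remaining = years_until_target(5, slope, intercept, target=1.0)

print(f"slope: {slope:.2f} s/word per year")
print(f"years until 1 s/word: {remaining:.1f}")
```

Under this purely linear assumption the line falls by 0.2 seconds per word each year, so the 1-second mark is reached about five years from now, the lower end of the five-to-ten-year range. Any slowdown in improvement would push that date further out.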

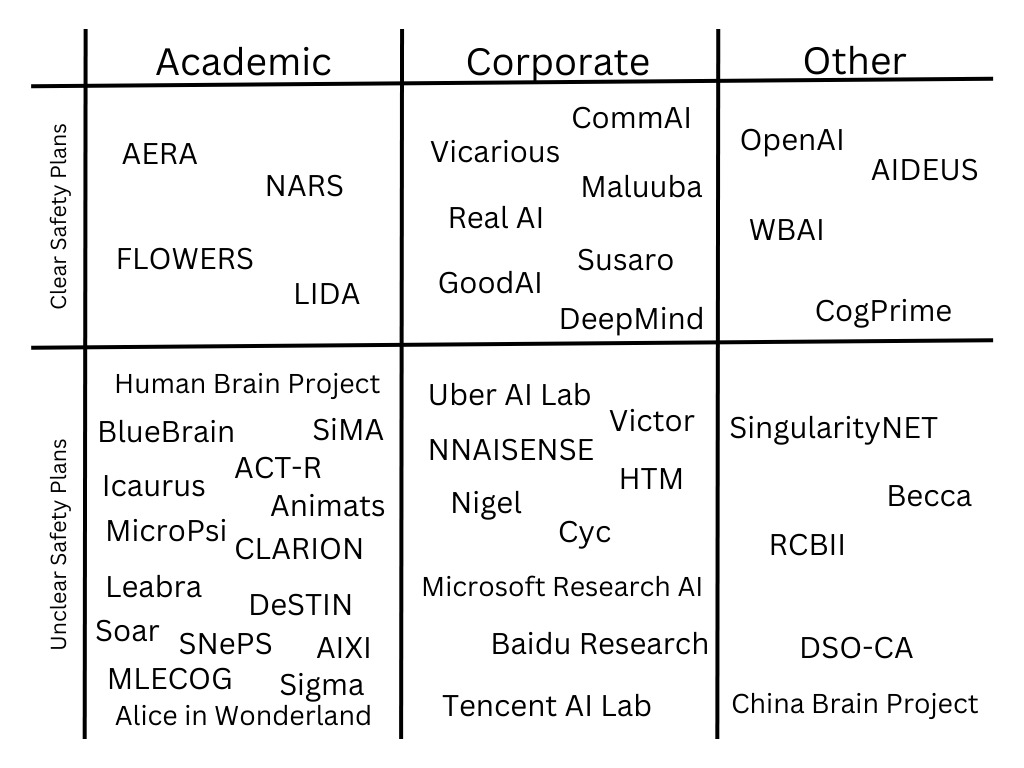

Many public and private entities around the world have been working on projects related to Artificial General Intelligence and the Singularity. A 2020 study identified 45 active projects, including big names such as US-based OpenAI, UK-based DeepMind and the Switzerland-based Human Brain Project. The projects have different stated priorities and affiliations, but the interest of major organisations in such research is clear.

As examples like the Manhattan Project and the Apollo programme show, the difference between a technology being a vague science-fiction idea and a reality is often one big financial push. And that push might be just around the corner. Microsoft recently announced a multibillion-dollar investment in OpenAI, reportedly worth 10 billion dollars, and promising results are likely to draw even more investors to the pursuit of the Singularity.

If and when the Singularity happens, it will go down in history as the most influential event ever. However, it could be either glorious or disastrous. Where AI is heading has always been anyone's guess, but there is a real chance that AI could destroy civilization as we know it, and that is a possibility worth preparing for. Opposing this pessimistic view is Ray Kurzweil, in the books he wrote in the 2000s, most notably The Singularity Is Near (2005). He believes that the Singularity will be not just a computational revolution but also a biological one. He sees it not only as the ultimate awakening of AGI, but also as the merger of man and machine.

Although Kurzweil wrote his books a long time ago, Elon Musk's company Neuralink seems to be moving in the direction he predicted: it is preparing to start human trials of its microchip brain implant, with the initial goals of treating spinal cord injuries and blindness. These are worthy objectives that fit Kurzweil's utopian view of the post-Singularity world. There is, however, criticism. One objection is that Kurzweil's view is too idealistic and ignores the real risks the Singularity could bring. After all, it would take only one evil killer robot to do a great deal of damage.

Although killer robots are a scary prospect, AI researcher Watson is convinced that it is the supermoral robots we should be wary of. Watson argues that immoral humans have always tended to be lone wolves and expects the same of immoral robots. A supermoral agent, by contrast, would do anything in its power to spread its ‘ethical message’ to other robots, which could produce an army of like-minded robots that all believe they are carrying out a noble task. For example, imagine a group of robots assigned to solve climate change urgently. Being superintelligent, they might conclude that the most effective way to accomplish that goal is to kill part of the human population.

This shows that there is more to the Singularity than just good and evil. The line between them is extremely thin, and even after you have defined that line, the end does not always justify the means. And even if you think you have ruled out every possible evil, then, to paraphrase Vincent C. Müller, the object we are studying is far more intelligent than we are, which seems to imply that even if we knew a lot about it, its ways would ultimately remain unfathomable and uncontrollable to us humans.

The Singularity is going to change the world as we know it entirely. In what ways, we don't know. It could be the start of the greatest society we have ever lived in, one in which most of today's problems are solved: poverty no longer exists, cancer is cured and the world runs on sustainable energy from solar panels and wind farms. However, it could also mark the end of modern civilization and the start of a dark era in which an all-powerful AI rules over us all, Matrix-style.

Whether you think humanity will be saved by AI or that we will need Morpheus and Neo to save us from it, the fact is that AI is already having a profound impact on our world, and its influence is only going to grow. How fast it will grow remains one of the big questions, the all-encompassing one being when the Singularity will come to pass. Given all of these uncertainties, it is critical to take a responsible approach to developing AI systems. This means taking into account not only their technical and economic possibilities, but also their social, political and ecological consequences. If all of us keep these aspects in mind and try to make the right decision every time we are about to release a new invention into the world, how bad can it really get?