“Any sufficiently advanced technology is indistinguishable from magic”, according to Arthur C. Clarke. Opinions on artificial intelligence (AI) differ: some indeed describe it as magical, whereas others remain more skeptical. This skepticism can almost sound like a bad science fiction movie: “AI will take over the world and humans will lose all their power!” While this might seem ridiculous, some serious risks have been connected to AI innovations.

These risks have also been connected to a reduction of freedom, which is the subject of this dilemma. It has been stated before that AI needs to be regulated so that individuals are protected from harmful uses of it. This article emphasizes that point as well.

To allow this discussion to take place, consensus must be reached on the definition of freedom. Freedom is understood as ‘either having the ability to act or change without constraint or to possess the power and resources to fulfill one’s purposes unhindered’. This definition will be used in this article to argue that regulations on AI innovations are needed to prevent a reduction of freedom in the future.

Surveillance systems

Firstly, as was shown in the Netflix documentary Coded Bias, using AI technologies within surveillance systems comes with huge risks. The documentary explains that artificial ‘intelligence’ is based on data, which is a reflection of society and thus also reflects the biases within society. It gives the example of a situation in London, where a new surveillance technique wrongfully picked black people out of a crowd because the system labeled them as ‘wanted’. They were not. One could argue: “Well, as time passes we improve our data, biases will be reduced and this issue will be solved”. But even if that were true, using AI in surveillance technologies still marks a ‘dangerous step’ for human rights and privacy rights, as Amnesty International states in an article by The Guardian. However good the intentions might be, the automation and large-scale deployment of surveillance systems will be a risk for privacy, and thus for freedom. Therefore, we argue that AI innovations within surveillance systems should be regulated to reduce these risks, as they can pave the way for a freedom-restricting society, especially towards minorities.

Saving time but losing jobs

On a more positive note, a widely shared opinion is that AI can save us a lot of time. AI can take over data-driven tasks like data collection and analysis, which can consume a lot of time when done manually. Besides that, it can take pressure off healthcare personnel by diagnosing diseases, monitoring patients, and providing treatment. It has been shown that AI is sometimes even better at detecting cancer than clinicians. This is an example of where AI could take over certain tasks to save time and improve effectiveness. But what about white-collar jobs like HR assistants, marketing specialists and tax examiners? These are among the many jobs most likely to be replaced by AI. McKinsey claims that about 800 million jobs could be gone by 2030. Certainly, the emerging influence of AI will also create a lot of new jobs, but losing your job and being forced to look for another implies a reduction of freedom. More layoffs, and more unemployed people who need to retrain for another industry in order to find a job, take freedom away and create stress. Having no work is not considered being free, since work is one of the main purposes of human beings. Therefore, it is important to ask ourselves whether certain jobs really need to be replaced in order to save time and money, and regulations should be made on this.

Risks of a filter bubble

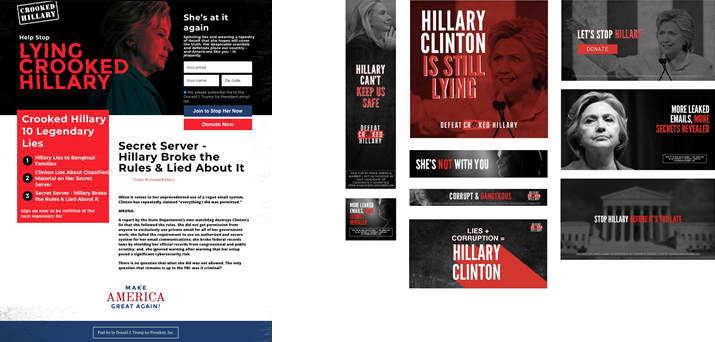

Furthermore, AI can narrow our view on issues and, with it, our freedom to make our own decisions. We live in a world where personalized advertisements and recommendations are common. Those personalized advertisements can be seen as a positive application of AI, because all of a sudden you get recommended a pair of shoes that you were looking for or a podcast on a subject you were talking about this morning. However, these ads have downsides. The more someone reads certain types of articles, for example, the more these types of articles get recommended to this person. This is also known as the filter bubble. Filter bubbles alter the way we encounter information and ideas, and they will eventually result in a very narrow view, according to Forbes. The Netflix documentary The Great Hack shows the famous Cambridge Analytica scandal surrounding the 2016 US presidential election and the major impact such a narrow view can have.

Cambridge Analytica used personalized pro-Trump and anti-Hillary advertisements to target people’s feelings and persuade them to vote for Trump. In the end, the voters are accountable for actually voting for Trump, but it can be questioned whether it was a free choice to begin with, especially if one is continuously spammed with pro-Trump information. Such a vote is not a choice made out of free will, but the result of unconscious manipulation. It has been argued that if we don’t take action, AI systems such as chatbots can seriously endanger our democracy. This is a major threat to freedom of speech and therefore requires regulation to prevent it from happening.

Loss of creativity

One could argue that artificial intelligence will only be an extension of society, and not a limitation. After all, AI could be described as ‘creative’, as it can produce its own work. Take DALL-E 2 or ChatGPT, which can create art, stories and code. According to Forbes, musicians are already using AI technologies both to create and to enhance their music. However, this argument does not hold. AI can only reproduce based on existing data, so it can never do something truly creative and new. In an extensive meta review, Walia concludes that creativity consists of three elements: (1) it is a key ability of individual(s), (2) it presumes an intentional activity and (3) the process occurs within a specific context. AI technologies might satisfy the first element, as they do perform the act of creating something, but they do not satisfy the other two. The second element describes creativity as production and not reproduction. However, AI can only ‘create’ by assimilating its input, which is thus reproduction. The third element states that creativity is based on producing a disequilibrium: something that does not conform with societal standards. This is inherently impossible with AI technology, as its input data is based on these standards and it can therefore only produce work that conforms with society. Based on this analysis of creativity, it can be concluded that AI technologies cannot be truly creative. They can only reproduce based on their input, which can actually be described as plagiarism. Therefore, AI adds nothing creative to society and actually takes away the freedom of creators by plagiarizing their work.

Conclusion

It can be concluded that although AI has a lot of potential benefits, its pitfalls must not be forgotten. AI increases the risk of a loss of freedom by reducing privacy through surveillance systems, taking away jobs and forcing people to retrain for another industry, undermining free will by creating a filter bubble, and restricting the freedom of creators through plagiarism. Since AI innovations will only develop further, there is a need for regulations in order to limit the risk of reduced freedom in the future.