Personal assistants, translation programs, automatic email replies, spam filters, well-suited recommendations on Netflix: the list can continue for as long as we can imagine. Artificial intelligence is already part of many applications we use daily, and its presence grows by the day. These applications think with us, help us, and make our work faster, more efficient, and more accurate. It is almost impossible to deny that AI makes our lives easier. What we can discuss is whether it also makes people more vulnerable by the day. Vulnerability can take many forms, as long as it is about being open to attack or damage, or being capable of being physically or emotionally wounded. We will consider four of those aspects.

addiction – loss of agility

The first aspect of vulnerability is something we can see in our everyday life. Close your eyes and think about the last time you boarded public transport. What do you remember? Do you remember that most people were staring at their screens, unable to look away? Or do you not remember because you were one of them? We are already almost unable to be independent of this growing technology while its integration is still in its infancy. Can you imagine that we will become more independent, less addicted, and more skillful when it is even more integrated into our lives?

We cannot deny that AI helps in certain respects. It can compensate for people's weaknesses and make them feel stronger; one example is making human beings quicker, more powerful, and more intelligent with AI implants or gene modification. We can come up with more and more illustrations of AI making humans "stronger" in some way. However, this does not change the fact that we still become dependent on it. What if AI stops working? What will the meaning of life be without it? Will we then become miserable? Will we lose the competence to develop ourselves?

Others argue that we can be the "modern" Athenians, with AI as our modern slaves. While AI works for us and does the most critical tasks, we will have more time to do the things we love the most. According to this argument, the citizens of ancient Athens hardly worked themselves and spent their time on leisurely activities they enjoyed. They are well known for their philosophers, who still significantly influence our modern-day lives. They also spent time exercising and concerning themselves with democracy.

That sounds like a utopia! Doing only the things you love and being happy, without worrying about the fulfillment of essential tasks. But the purpose of life is not to be happy.

“The purpose of life is not to be happy. It is to be useful, to be honorable, to be compassionate, to have it make some difference that you have lived and lived well.”

Ralph Waldo Emerson

With these words of Ralph Waldo Emerson, an essayist and influential thinker from the US, we gain a perspective on the purpose of human life. Creating things that serve those purposes is only possible with hard work. Philosophy, exercise, and concern for democracy are likewise only possible through hard work, deep thinking, self-motivation, and self-development. So the Athenians did work that fulfilled the purpose of life as Emerson describes it. But will we be able to do the same? Or, while we think AI is our slave, are we actually its slaves?

In our view, the second case is the most likely one if we do not strengthen our willpower. You have probably heard of the problematic behavior caused by dopamine release, or, put more simply, "I cannot concentrate." Dopamine is one of our essential neurotransmitters; it ensures, for example, that we eat when we are hungry. Tech companies exploit this neurotransmitter by studying what triggers dopamine surges and building those triggers into their algorithms. While reading a book for an hour is arduous, scrolling through someone's Instagram feed for the same duration is far more comfortable. Reading a book also produces dopamine, but the effect declines over time. That is not the case for social media, which constantly serves new content and thus new dopamine. The problematic part is the continuous switching of attention between all the things on the internet, which keeps the dopaminergic system firing.

To create things, we first need to analyze and then use all of our mental skills. The troublesome part is paying attention, concentrating, and knowing yourself. In short, creating something requires discipline. Having fun while working hard and fulfilling our life purpose, while AI slaves do the dirty and difficult labor, is challenging because of this constant attention switching. If we cannot do work that needs concentration, if our activities become routine, and if we do not take part in mentally demanding activities, our brain will slowly lose its ability to think and, with it, its skills.

new ways of hacking

Another aspect of vulnerability, besides addiction and dependence, is the growing connectivity of devices. Every device becomes "smart" these days, thanks to AI. While hacking used to be possible on only a handful of devices, this is no longer the case. This growing connected network opens new opportunities for a new generation of thieves and people with other malicious intentions. Unlike traditional thieves, they no longer need to leave their warm houses. A cup of coffee, some cleverness, and lots of practice are almost enough. Some will argue that security improves too, or that we will create security bots. But do hackers not become more skilled as well? Security is never perfect; there is always a way around it. Some attacks just need extra time and effort.

Because more devices are connected and hackers can gain access to AI automation, they could increase the scale of their attacks. Nowadays, attackers rely mostly on small workforces or limited automation to coordinate their attacks. But if they gain full machine learning and AI capabilities, they can simplify labor-intensive attacks and, even worse, recruit massive numbers of bots powered by machine learning. We believe that this will be a problem in the near future because machine learning systems are fragile. While a burglar who does far less damage has a high chance of being arrested, attacks that use new algorithms in malware can become less detectable!

abuse of power

AI does have advantages; for example, it can make daily, routine tasks easier for us human beings. On the other hand, this implies that we are becoming dependent on the upcoming technologies, as discussed earlier. Another aspect of vulnerability is the abuse of power.

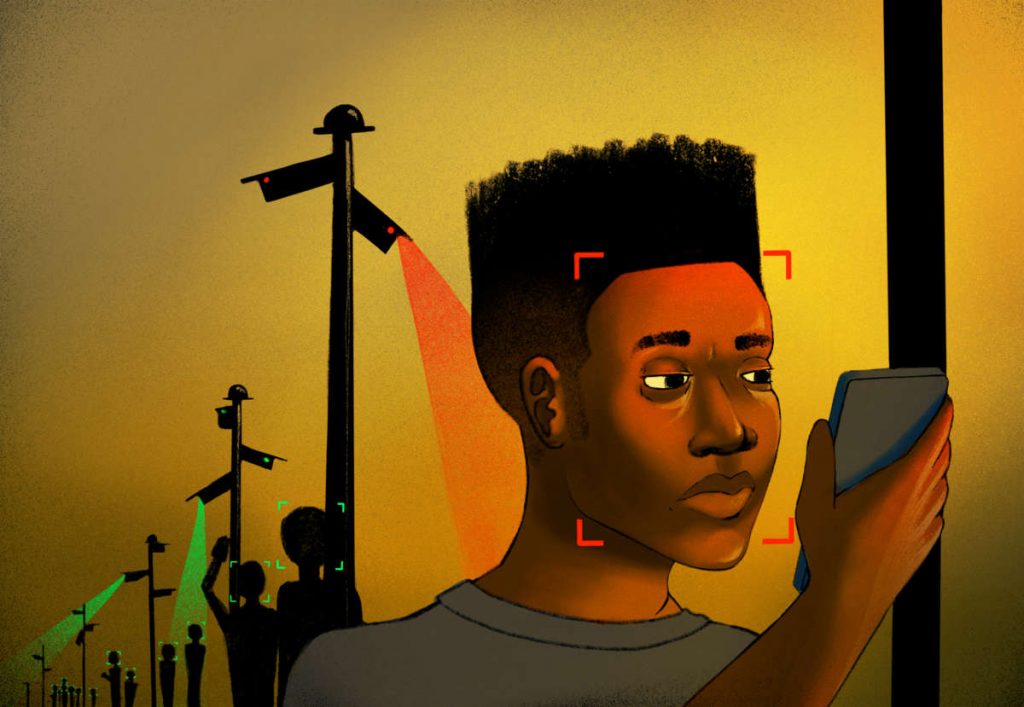

Think of killer robots, facial recognition systems, and constant surveillance by authoritarian regimes; these can cause serious trouble for citizens if used to target specific populations in society. AI can lead to discrimination and bias, such as racial or gender-based discrimination. Taken together, these developments could eventually become a real threat to our democracy.

AI is often invisibly coded with human biases, states Kate Crawford, a Microsoft researcher. She claims: “Just as we are seeing a step function increase in the spread of AI, something else is happening: the rise of ultra-nationalism, rightwing authoritarianism and fascism.” Human beings feed AI systems and algorithms; we consider this one of the critical problems with AI, because those humans decide what is right and wrong. Take, for instance, the controversial research by the Chinese researchers Xiaolin Wu and Xi Zhang. They claim to have developed a bias-free system that can predict whether someone is a criminal based on his or her facial features. They did this by training the algorithm on Chinese government ID photos of criminals and noncriminals. From there, they tried to identify predictive features. In our opinion, this is morally and ethically wrong.

Claiming that a system is bias-free must take a lot of courage, but most of the time such a claim misleads people about the truth. A system can never be bias-free if it is trained on human-generated data, because bias is always present in the datasets. Also, in this specific research, the training data was based on Chinese citizens only. Having tested only one ethnic group, the researchers cannot, in our view, justifiably conclude that the system can recognize criminals. Imagine that the Chinese citizens marked as criminals share a facial feature with a white European. If this European person were to enter China and such a system scanned his face, he would be judged unfairly and classified as a criminal. This type of misclassification causes discrimination against humans. Selecting people and predicting their behavior based on facial features is used to justify the unjustifiable. Consider recent history: during World War II, Jews, Roma, and other minorities were registered, targeted, thrown into concentration camps, and in the worst case murdered on the basis of their physical appearance and religious beliefs.

Knowing that AI is used to register people, predict behavior, and make decisions based on facial features is very disturbing. What will happen if the data falls into the wrong hands? What will an authoritarian regime like China do with the data? These are all unanswered questions, but there is only one plausible answer if we think clearly about it. Those in power use AI to keep and increase their power. So one powerful group will emerge within society, in this case the government.

In countries with an authoritarian regime, AI systems assist the government with domestic control and surveillance. Some might argue that mass surveillance is a good thing because it protects the country from crime, social unrest, and terrorist attacks. Although these are legitimate concerns, which we understand, there are solutions other than AI to guarantee a country's national security. Mass surveillance is criticized because it interferes with privacy rights and limits political rights and freedoms, e.g., freedom of speech. Every move or action an individual undertakes is registered, including text messages, phone calls, social media posts, and emails. An individual considered a threat to the government can be tracked down and uncovered rapidly with the help of AI systems. In a democratic country, these practices will threaten democracy because they interfere with fundamental human rights.

privacy & legislation

Protecting privacy and human rights is essential when dealing with AI. AI can create new personal data based on previously gathered data about an individual. This data is created and provided without the consent or knowledge of that individual. We find this disturbing, as it looks like companies are stealing individuals' data to make themselves more powerful. Nowadays, consumers are becoming more aware of how their private information is collected and used. However, when downloading an application on your phone or visiting a website, you have to give your consent; otherwise, you cannot continue. What you are consenting to is, most of the time, not clearly stated and is written in small print.

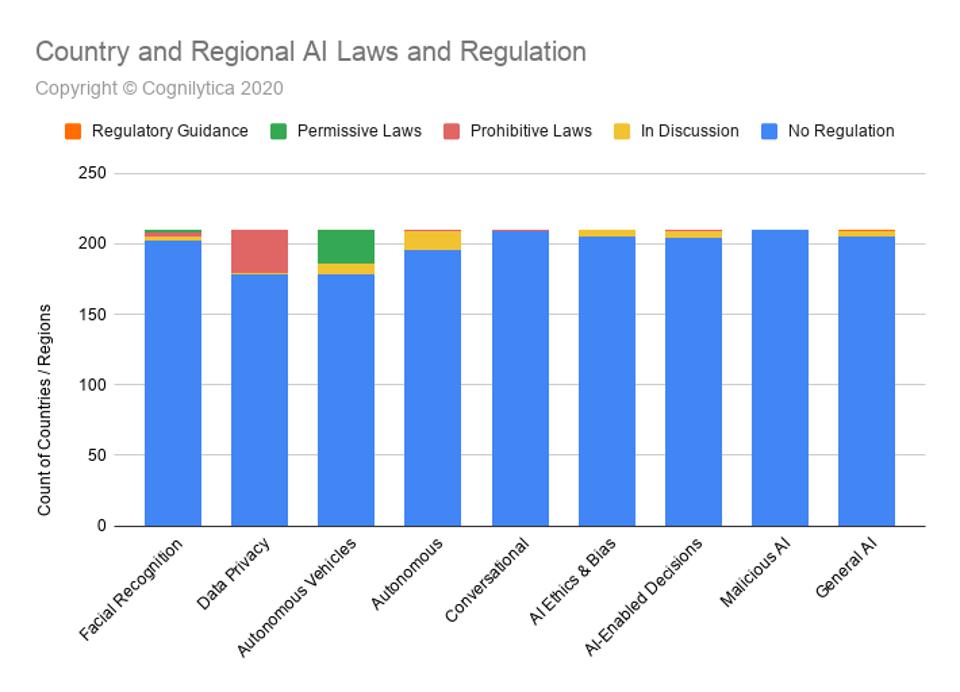

To minimize potential concerns, risks, and dangers, it is crucial that AI laws and legislation are put in place. Unfortunately, most governments are adopting a wait-and-see approach. They do this because it is hard to predict how a new technology will be used or abused. For lawmakers, it is still too early to see how AI will impact citizens and society. A research firm called Cognilytica has published a report on worldwide AI laws and regulations. It shows the latest legal and regulatory actions that different countries have taken in several areas of AI. In the accompanying graph, you can see that for the most part there are no regulations on the various AI fields. For autonomous vehicles, there are permissive laws in some (fewer than 50) countries; this means that there is still a lot to discuss and consider in most parts of the world. In the category of Data Privacy, you see prohibitive laws; these are laws and regulations such as our GDPR (General Data Protection Regulation).

conclusion

In conclusion, although AI may have been created with the right intentions, we see that it has many negative aspects. It makes humans vulnerable, dependent, more hackable, and open to abuse by powerful parties, and it invades their privacy. Despite these negative future scenarios, AI is still growing on a daily basis. It is almost impossible to put a stop to it. What we need to do is steer it in the right direction. Our first piece of advice is for (future) AI developers: think about your purposes. Is what you create genuinely needed? What kind of effects will it have on human beings? Do not create something just for fun or just to make a lot of money with it. Our second piece of advice is for the officials of international organizations and governments: protect your fellow man and your descendants. Without special legislation, these developments make the rich richer and more powerful, while the poor become more impoverished and vulnerable. Lastly, a call to all people: please be aware of recent developments, educate yourself, train your willpower, know your rights, and use these developments only if you actually need them.
