In 2018, a whistleblower revealed that a little-known political consulting firm called Cambridge Analytica had harvested the data of up to 87 million Facebook users, in what was described as one of the biggest data scandals of the year. This data was then used to build psychological profiles of individual voters, first to win votes for Ted Cruz in the Republican presidential primaries and later to help elect Donald Trump as the 45th President of the United States.

Sadly, this was not an isolated incident. As the years go by, more and more cases of data leaks and misuse of user data by corporations have come to light. The data collected is used not for the benefit of users but for the profit of the corporation.

Artificial intelligence (AI) has come to play a central part in all this as technology advances. AI systems affect almost every aspect of your digital life. During the pandemic, people were forced to do nearly all their activities from home over the internet, so your digital life may effectively have been your whole life. AI is used widely by corporations of all shapes and sizes, from automatically tagging people in the photos you upload to Facebook to gauging your feelings towards a brand through sentiment analysis.

Why should I be worried about my privacy? I don’t have anything to hide.

In his TED talk "Why privacy matters", the journalist Glenn Greenwald describes how he answers people who say that someone with nothing to hide has no reason to worry about privacy:

“Here’s my email address. What I want you to do when you get home is email me the passwords to all of your email accounts, not just the nice, respectable work one in your name, but all of them, because I want to be able to just troll through what it is you’re doing online, read what I want to read and publish whatever I find interesting. After all, if you’re not a bad person, if you’re doing nothing wrong, you should have nothing to hide.”

According to Greenwald, not a single person has taken him up on the offer.

Your activity is logged and stored by these corporations from the moment you log in to Facebook, Instagram, Google or any other digital service. They build shadow profiles that hold a great deal of sensitive information, ranging from your likes and dislikes to the searches you have made on the internet. With the help of AI, this data can then be used either to mimic you or to target you specifically.

These corporations also sell or share the stored user data with other corporations and third parties, which do not necessarily have users' best interests at heart.

The threat does not come from corporations alone. Governments, too, have started using AI and the data they gather to build racial profiling systems. So far these systems have caused more harm than good, and in some cases they have influenced decisions made by humans.

What role does AI play in all this?

Our world is undergoing an information Big Bang, in which the universe of data doubles every two years and quintillions of bytes of data are generated every day. To put this more simply, consider big data, whose impact is often described in terms of three V's: volume, variety and velocity.

- Volume: more data makes analysis more powerful.
- Variety: different kinds of data add to this power and enable new and unanticipated inferences and predictions.
- Velocity: speed allows analysis and sharing to happen in real time.

AI will only accelerate this trend. Today, much of the personal data being analyzed is processed by machine learning, and many decisions are made by algorithms. As AI evolves, it will magnify the ability to use personal information in ways that intrude on privacy, raising the analysis of personal information to new levels of power and speed.

But couldn’t data (and AI) be used for good?

The thing is, AI is powerless without data. Data is one of the main things that propel AI, and this dependence creates major obstacles for the technology's future research and development. During the COVID-19 pandemic, several papers discussed using AI to detect, help fight and possibly prevent future pandemics. But this would require large amounts of data collected across a country or even the world. The question then arises of when such data collection should stop, or whether it should happen in the first place, and how the data should be erased afterwards. Some countries' laws contain provisions for this. In the end, though, it depends on how well the data is handled or disposed of once such events have passed.

Companies are establishing legal and compliance teams to handle the privacy issues that arise when working with big data or AI. Companies such as Cape Privacy have started offering open source privacy components for developers to use. Some, like Facebook, have also pledged to extend GDPR protections to their users worldwide.

What has been done to rectify this?

In January 2021, nearly 2 billion people around the world who use WhatsApp, Facebook’s instant messaging service, were greeted with a giant pop-up that read:

“WhatsApp is updating its terms and privacy policy.”

The update came with a 4,000-word privacy policy. It stated that WhatsApp would share users' phone numbers, IP addresses and payments made through the app with Facebook and other Facebook-owned platforms such as Instagram. In response, Elon Musk, CEO of Tesla and SpaceX, tweeted “Use Signal”.

This is not the first time Facebook has been embroiled in privacy controversies; the antitrust hearings of 2019 and 2020 are well known. Google, too, has been accused of similar practices. So the question now is: what should be done? Do we need new privacy laws, or a regulatory body to oversee the activities of these tech giants?

Over 80 countries and independent territories have now adopted comprehensive data protection laws. These include nearly every country in Europe and many in Latin America and the Caribbean, Asia and Africa. These laws are largely based on the fair information practice guidelines developed by the U.S. Department of Health, Education, and Welfare (HEW), later renamed the Department of Health and Human Services (HHS).

General Data Protection Regulation

The GDPR is a regulation in EU law on data protection and privacy. It also addresses the transfer of personal data outside the EU and EEA. The GDPR was approved by the European Parliament in April 2016 and published in the Official Journal, in all the official languages of the EU, in May 2016. It became enforceable across the European Union on 25 May 2018.

The law applies to organizations operating in the EU, and to any organization outside the EU that offers goods or services to customers or businesses in the EU. In practice, this means that almost every major corporation in the world must comply with the GDPR. Organizations must ensure that the personal data they collect is gathered legally and under strict conditions. Those who collect and manage it must protect it from misuse and exploitation and respect the rights of data owners, or face penalties.

Personal Information Protection and Electronic Documents Act

PIPEDA, Canada's federal private-sector privacy law, came into effect in 2001 for federally regulated private organizations. It was extended to other private-sector organizations on 1 January 2004. PIPEDA sets out rules governing how organizations collect, use and disclose personal information in the course of commercial activity, while recognizing individuals' right to privacy over their personal information.

People have also made independent efforts, through non-profit organizations and websites that help others become aware of their own privacy and security online. Organizations such as the Electronic Frontier Foundation and Privacy International are among the entities pushing for better privacy and security laws.

There are also smaller efforts, such as the following:

- Privacy Tools. A website that details privacy-centric alternatives to many of the apps and devices you use every day.

- Prism Break. Lists privacy-respecting applications for a range of platforms, from Android and iOS to desktop operating systems.

- Privacy-centric subreddits. Forums run by like-minded people who help each other move away from privacy-abusing services and raise awareness of the rules these tech giants break. r/privacy and r/degoogle are two well-known examples.

- Signal. A messaging app developed by a non-profit. It is endorsed by the whistleblower and privacy advocate Edward Snowden, and by others such as Bruce Schneier, a world-renowned security technologist.

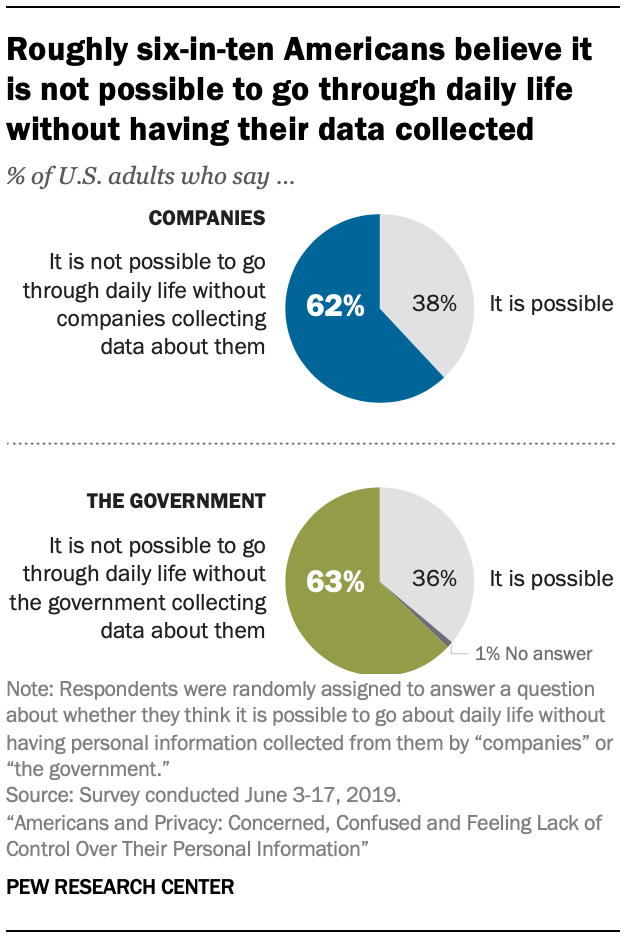

Other countries, such as the Philippines, Brazil and the USA, along with many organizations, are also taking steps to improve privacy and security. Yet data breaches and privacy scams remain abundant, and people feel unsafe in this digital era. Despite these laws, people across the globe are concerned, confused and feel a lack of control over their personal information. According to research by the Pew Research Center, roughly 6 in 10 Americans believe it is not possible to go through daily life without having their data collected.

So, what’s next?

The digital world grows every day, and the pace keeps increasing. According to DataReportal, as of January 2021 there were more than 4.20 billion social media users worldwide, a number still rising by around 15 new users every second. Current platform figures were:

- Facebook: 2.740 billion users.
- YouTube: a potential advertising reach of 2.291 billion.
- WhatsApp: 2 billion monthly active users.

These numbers will keep growing as more of the world's population comes online. Privacy issues will multiply as well, and may start cropping up in regions with no law whatsoever. The result could be a huge crisis that might easily have been prevented before it became everyone's problem.

We would like to propose a few possible solutions to this issue:

Reduction of Black Box AI.

Black box AI systems are those that give no explanation of why they reached a particular decision. This opacity makes problems hard to discover, such as whether:

- the system has been attacked by bad actors;
- it has some built-in bias;
- it is giving users bad output.
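The opposite of a black box is a model whose every decision can be traced back to its inputs. A linear scoring model is the simplest case, because each feature's contribution to the decision is just its weight multiplied by its value. The sketch below illustrates that idea only; explaining complex models such as deep neural networks takes more specialized techniques. The class name, feature names, weights and threshold are all hypothetical, chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class LinearScorer:
    """A transparent model: score = bias + sum(weight * feature value)."""
    weights: dict[str, float]
    bias: float
    threshold: float

    def explain(self, features: dict[str, float]) -> tuple[bool, dict[str, float]]:
        # Each feature's contribution is weight * value, so the decision
        # can be traced back to exactly the inputs that drove it.
        contributions = {
            name: self.weights[name] * features.get(name, 0.0)
            for name in self.weights
        }
        score = self.bias + sum(contributions.values())
        return score >= self.threshold, contributions


# Hypothetical loan-style example; names and numbers are illustrative only.
model = LinearScorer(
    weights={"income": 0.5, "late_payments": -2.0, "zip_code_risk": -1.0},
    bias=0.0,
    threshold=1.0,
)
approved, why = model.explain({"income": 6.0, "late_payments": 1.0, "zip_code_risk": 1.0})
print(approved)  # False
# Print contributions from most negative to most positive:
# late_payments: -2.0
# zip_code_risk: -1.0
# income: +3.0
for name, value in sorted(why.items(), key=lambda kv: kv[1]):
    print(f"{name}: {value:+.1f}")
```

Here the explanation shows a feature like `zip_code_risk` pulling the decision down. Such a feature can act as a hidden proxy for bias, and an opaque model would conceal it.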

Establishment of a privacy governing body.

A specialized global agency would help further the agenda of digital privacy and security. Modelled on the World Health Assembly (WHA), this body would bring together all member states of the United Nations. Together they would shape a global standard for digital privacy and security, preventing corporations and governments from misusing the data they collect from people.

In an ideal world, the body's representatives would be researchers and data scientists with no corporate affiliations, who would decide which laws need to be put in place.

Consumer Co-Operatives.

A rather radical idea is to transform these huge corporations into consumer co-operatives, owned by their users, so that they cannot misuse their users' data.

Teaching AI to forget.

A more plausible solution is for corporations to adopt methods that allow an AI to forget data, protecting not only privacy but also global security. Fortunately, researchers have already started working on this idea, known as machine unlearning, and some progress appears to have been made.
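One published approach to machine unlearning is SISA (sharded, isolated, sliced, aggregated) training. It splits the training data into shards and trains a separate model on each. Forgetting a record then means deleting it and retraining only the shard that held it, not the whole model.

The sketch below is a heavily simplified illustration of the sharding idea only; it omits slicing. The "model" is a hypothetical toy nearest-centroid classifier, and the names `ShardedModel`, `train_centroids` and `forget` are our own, not from any library or company system.

```python
from collections import Counter


def train_centroids(records):
    """Toy model: the mean feature vector for each label in a shard."""
    sums, counts = {}, Counter()
    for features, label in records.values():
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, x in enumerate(features):
            acc[i] += x
        counts[label] += 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def nearest_label(centroids, features):
    """Predict the label whose centroid is closest (squared Euclidean distance)."""
    return min(
        centroids,
        key=lambda label: sum((a - b) ** 2 for a, b in zip(centroids[label], features)),
    )


class ShardedModel:
    """One isolated toy model per shard; predictions are a majority vote."""

    def __init__(self, num_shards):
        self.shards = [{} for _ in range(num_shards)]  # record_id -> (features, label)
        self.models = [{} for _ in range(num_shards)]

    def fit(self, records):
        # Integer record IDs are assigned to shards by record_id % num_shards.
        for record_id, (features, label) in records.items():
            self.shards[record_id % len(self.shards)][record_id] = (features, label)
        for i, shard in enumerate(self.shards):
            self.models[i] = train_centroids(shard)

    def predict(self, features):
        # Skip shards that have become empty after forgetting.
        votes = Counter(nearest_label(m, features) for m in self.models if m)
        return votes.most_common(1)[0][0]

    def forget(self, record_id):
        """Delete one record and retrain only the shard that held it."""
        i = record_id % len(self.shards)
        del self.shards[i][record_id]
        self.models[i] = train_centroids(self.shards[i])
        return i


data = {
    0: ([1.0, 1.0], "cat"), 1: ([1.2, 0.9], "cat"), 2: ([0.9, 1.1], "cat"),
    3: ([5.0, 5.0], "dog"), 4: ([5.2, 4.8], "dog"), 5: ([4.9, 5.1], "dog"),
}
sharded = ShardedModel(num_shards=3)
sharded.fit(data)
print(sharded.predict([1.1, 1.0]))  # cat
retrained = sharded.forget(4)  # record 4 lives in shard 4 % 3 == 1
print(retrained)  # 1
```

The design trade-off is that each shard's model sees only part of the data. In exchange, honouring a deletion request costs roughly one shard's worth of retraining rather than a full retrain.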

Conclusion

Almost ten years ago, the US retailer Target used an algorithm that analyzed customers' purchase patterns to predict whether they were pregnant, and then mailed them coupons accordingly. This was problematic, most notably in one case where a young woman had not yet told her family about her pregnancy. Deciding how to act on such information is an ethical issue, and one that businesses often handle badly. The more important question is what businesses should be allowed to know about individuals. The EU's GDPR, Canada's PIPEDA and California's CCPA are just the beginning. None of them is perfect and all will evolve, but privacy laws will expand.

But laws alone will not be enough. A conscious effort must be made to ensure that AI does not cause breaches of anyone's privacy simply for the sake of profit. As time goes on and technology advances, the line between digital privacy and security is starting to vanish, merging the two into one. If this is disregarded, the result might be a dystopian future in which humans no longer have any value and simply become a herd producing data to feed the great machines.